Data Quality on Read

A Practical Guide to Mask-Based Data Profiling

Andrew Morgan

First Edition — 2026

Copyright

Data Quality on Read: A Practical Guide to Mask-Based Data Profiling

© 2026 Andrew Morgan

This work is licensed under the Creative Commons Attribution 4.0 International License (CC BY 4.0).

You are free to:

- Share — copy and redistribute the material in any medium or format

- Adapt — remix, transform, and build upon the material for any purpose, including commercially

Under the following terms:

- Attribution — You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

Full license text: creativecommons.org/licenses/by/4.0

Author: Andrew Morgan

Publisher: Andrew Morgan

First edition: 2026

Tools: dataradar.co.uk · github.com/minkymorgan/bytefreq

Source: github.com/minkymorgan/DataQualityOnRead

Contact: andrew@gamakon.ai

Foreword

Every organisation that works with data eventually discovers the same uncomfortable truth: the data is not what the documentation says it is. The specification describes an ideal. The file contains reality. The gap between them is where projects stall, budgets overrun, and decisions go wrong.

In nearly two decades of building data platforms — across financial services, government, telecoms, and open data — I have seen this gap consume more time, money, and goodwill than any other single problem in data engineering. Not because the problem is hard to understand, but because the tools for discovering it have historically been slow, expensive, and assumption-heavy. You needed to know what you were looking for before you could look for it.

Mask-based profiling inverts that assumption. It asks no questions about the data. It makes no assumptions about what the data should contain. It simply translates every value into its structural fingerprint and counts the results. The dominant patterns tell you what the data is. The rare patterns tell you what has gone wrong. The technique is mechanical, deterministic, and fast — and it works on any data, in any language, at any scale.

This book describes the technique, the architecture that surrounds it, and the open-source tools that implement it. It is written for practitioners: data engineers, analysts, and anyone who has ever opened a file and wondered what they were looking at. The ideas are simple. The implementation is straightforward. The impact, in my experience, is transformative.

I hope you find it useful.

Andrew Morgan

February 2026

Introduction

In 2007, while working on a data migration for a financial services client, we received a file that was described as containing customer addresses. The specification said the fields were fixed-width, ASCII-encoded, with UK postcodes in column 47. When we loaded the file and profiled it, we discovered that column 47 contained a mixture of valid postcodes, phone numbers, the string "N/A" repeated 11,000 times, and — in one memorable case — what appeared to be someone's lunch order.

The specification was wrong. Or rather, the specification described what the data should look like, and the file contained what the data actually looked like. These are not the same thing, and the gap between them is where data quality lives.

This experience, repeated in various forms across financial services, telecoms, government, and open data projects over nearly two decades, led to the development of a simple but surprisingly powerful technique: mask-based data profiling. The idea is straightforward. Take every character in a data field and translate it to its character class — uppercase letters become A, lowercase become a, digits become 9, and everything else (punctuation, spaces, symbols) stays as it is. The result is a structural fingerprint of the value, a mask, that strips away the content and reveals the shape of the data underneath.

When you profile a column by counting the frequency of each mask, patterns emerge immediately. The dominant masks tell you what the data is supposed to look like. The rare masks — the long tail — tell you where the problems are hiding. No regex, no schema, no assumptions about the data required. Just a mechanical translation that lets the structure speak for itself.

This book describes that technique, the architecture around it, and the tools that implement it. The technique itself is called Data Quality on Read (DQOR), a deliberate parallel to the "Schema on Read" principle that underpins modern data lake architectures. The core idea is the same in both cases: accept raw data as-is, defer processing until the moment of consumption, and never overwrite the original. In the schema case, you defer structural interpretation. In the quality case, you defer profiling, validation, and remediation. The benefits are the same: agility, provenance, and the ability to reprocess history when your understanding improves.

The tools are bytefreq, an open-source command-line profiler now implemented in Rust, and DataRadar, a browser-based profiling tool that runs entirely client-side using WebAssembly. Both implement DQOR from the ground up, and both are free to use.

The book is structured in three parts. Part I sets out the problem: why data quality is hard, and why the traditional approaches — schema validation, statistical profiling, regex-based checks — leave gaps that mask-based profiling can fill. Part II introduces the technique in detail: masks, grain levels, Unicode handling, population analysis, error codes, and treatment functions. Part III describes the architecture that ties it all together: the flat enhanced format (a trick borrowed from Hadoop-era feature stores), and the tools that implement it at different scales.

The intended audience is anyone who works with data they did not create: data engineers, analysts, scientists, and the growing number of people who find themselves responsible for data quality without having chosen it as a career. The technique is simple enough to prototype in a single line of sed, and powerful enough to run in production at enterprise scale. We will cover the full range.

Discovery Before Exploration

Before you profile the values in a field, you need to know what fields exist and how populated they are. This sounds obvious. It is obvious. And yet the most common mistake in data quality work is to dive straight into field-level analysis — examining the values in a column — without first understanding the shape of the dataset as a whole. Structure discovery comes before content exploration. Always.

For tabular data — CSV files, fixed-width extracts, pipe-delimited feeds — this means counting non-null values per column. If a dataset has 55 columns but only 20 of them are more than 50% populated, that fact alone reshapes your entire profiling strategy. You do not need to know what is in the other 35 columns yet. You need to know they are mostly empty. That knowledge takes seconds to acquire and saves hours of misdirected effort.
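The census step for a delimited file can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not how bytefreq or DataRadar implement it. The first row is taken as the header, a cell counts as null if it is missing or blank, and `population_profile` is a hypothetical helper name.

```python
import csv
import io


def population_profile(csv_text: str, null_tokens: tuple = ("",)) -> dict:
    """Return {column: fraction of rows holding a non-null value}.

    Assumes the first row is a header. A cell counts as null if it is missing
    or, after stripping whitespace, equals one of null_tokens.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    columns = reader.fieldnames or []
    filled = {c: 0 for c in columns}
    rows = 0
    for row in reader:
        rows += 1
        for c in columns:
            value = row.get(c)
            if value is not None and value.strip() not in null_tokens:
                filled[c] += 1
    return {c: (filled[c] / rows if rows else 0.0) for c in columns}


sample = """id,name,postcode,fax
1,Ada,SW1A 1AA,
2,Bob,,
3,Cy,EC1A 1BB,
4,Di,M1 1AE,
"""

rates = population_profile(sample)
for column, rate in rates.items():
    print(f"{column:10} {rate:6.1%}")

# Columns at or below 50% populated: note them, then set them aside for now.
mostly_empty = [c for c, rate in rates.items() if rate <= 0.5]
print("mostly empty:", mostly_empty)
```

Passing a wider `null_tokens`, such as `("", "N/A", "NULL")`, would also treat common placeholders as empty. Whether that is appropriate depends on the field, so the sketch leaves the choice to the caller.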

For nested data — JSON, XML, hierarchical formats — the same principle applies, but the discovery step is different. You walk the structure to find every field path, then count how many records contain each path. A JSON feed might have 200 distinct field paths, but any given record might populate only 40 of them. A field that appears in 10% of records tells you something important before you have looked at a single value. A field that appears in 100% of records tells you something different. The population profile across all paths is the first thing you need, and the last thing most people think to check.
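For nested data, the same census can be sketched by walking each parsed record and counting, per path, how many records contain it. The sketch assumes newline-delimited JSON with one object per line. The dotted path notation and the `[]` marker for array elements are conventions chosen for this illustration and may differ from the path names the tools in this book emit.

```python
import json
from collections import Counter


def field_paths(node, prefix=""):
    """Yield every dotted field path present in a parsed JSON value.

    Objects contribute one path per key; array elements share their parent's
    path with a "[]" suffix, so every element of a list maps to the same path.
    """
    if isinstance(node, dict):
        for key, child in node.items():
            path = f"{prefix}.{key}" if prefix else key
            yield path
            yield from field_paths(child, path)
    elif isinstance(node, list):
        for item in node:
            yield from field_paths(item, f"{prefix}[]")


def path_population(records):
    """Count the records in which each path appears (at most once per record)."""
    counts = Counter()
    for record in records:
        counts.update(set(field_paths(record)))
    return counts


feed = [
    '{"id": 1, "name": {"first": "Ada"}, "tags": [{"k": "x"}]}',
    '{"id": 2, "name": {"first": "Bob", "last": "Lee"}}',
    '{"id": 3}',
]
records = [json.loads(line) for line in feed]
counts = path_population(records)
for path, n in sorted(counts.items()):
    print(f"{path:12} {n}/{len(records)}  {n / len(records):.0%}")
```

Counting each path at most once per record (the `set` in `path_population`) is what makes the result a population rate rather than an occurrence count: a record with ten tags still contributes one to `tags[].k`.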

Think of it as a census before a survey. The census maps the territory: what exists, where it is, how much of it there is. The survey examines individual items in detail. Running the survey without the census means you do not know what you are missing, what you are over-sampling, or where your effort is best spent. The field population profile is the map. Profile without it and you are navigating blind.

This principle prevents wasted effort in both directions. Profiling a field that is 99% empty is rarely the best use of your time — you will generate a mask frequency table dominated by a single empty-value pattern and learn almost nothing. Conversely, discovering that a field described as "mandatory" in the specification is only 45% populated is itself a significant data quality finding — and you found it in the discovery phase, before spending any time on content analysis. Some of the most valuable insights come from the map, not from the territory it describes.

The worked examples in this book follow this principle explicitly. Each begins with a structure discovery phase — field counts, population rates, structural metadata — before moving to field-by-field mask analysis. This is not a stylistic choice. It is the method. Discovery before exploration, every time.

Data Quality Without Borders

The world's largest generators and consumers of data are in the public sector. Central, regional, and local governments manage millions of data transfers across ministerial boundaries every day. This separation of concerns makes government the largest environment in which Data Quality on Read is needed, and arguably the one where it is most urgent.

The stakes are high. "Single view of citizen" systems help governments deliver better services and ensure people do not fall between the cracks. But building these views requires integrating data from systems that were never designed to talk to each other, encoded in formats nobody fully documented. And because the data is personally identifiable, access to view raw records is rightly restricted — making data quality work uniquely difficult. You need to understand structure and quality without seeing content. This is where mask-based profiling shines: a mask reveals the shape of the data without exposing whose data it is.

The 33 languages in DataRadar's first tier of localisation — from English and French to Amharic, Hausa, Swahili, Tamil, Nepali, and Chinese — cover approximately 5.5 billion citizens. Data quality tools have historically supported only English interfaces and Latin-script datasets. A civil servant in Addis Ababa profiling census data in Amharic, or a local government analyst in Lagos working with Hausa-language records, had no tools built for them. DataRadar and bytefreq are.

All citizens deserve effective government services, and data quality is a prerequisite for delivering them. Multilingual, privacy-first, zero-install tools that work across scripts, languages, and borders — that is the ambition.

From Files to Services

The techniques in this book can profile a single file in seconds. But the real prize is bigger than files.

Consider a government department that receives data from dozens of external collectors — local councils, NHS trusts, schools, partner agencies — and publishes onward to downstream consumers. How does a Chief Data Officer know whether the department is producing good quality data? How does a CTO assure the service, not just individual datasets?

The answer is to treat data quality profiling as infrastructure, not as a one-off activity. The building blocks described in this book — mask-based profiling, the flat enhanced format, population analysis, assertion rules — are designed to assemble into a monitoring architecture that can assure an entire data service.

The pattern has two sides.

Exit checks run at the point of production. Before a department publishes a data feed, the profiling engine runs against the output and generates a quality report — mask distributions, population rates, character encoding composition, assertion rule results. This report is stored as a timestamped fact record. Over weeks and months, a timeseries builds up: a continuous measurement of what the department is actually shipping.

Entrance checks run at the point of consumption. When a downstream system receives a data feed, the same profiling engine runs against the input. The entrance report is compared against the expected baseline (derived from the exit checks or from an agreed specification). Deviations are flagged. New masks appearing, population rates dropping, encoding shifts — all are detected automatically, before the data enters the consumer's pipeline.
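The mask-distribution part of an entrance check can be sketched as a comparison between two mask frequency reports for the same field: one from the baseline, one from the feed just received. The function name, the report shape (`{mask: count}`), and the 5% threshold for a shift in share are all hypothetical choices for this illustration. Population rates and encoding composition would be compared in the same spirit.

```python
def compare_to_baseline(baseline: dict, current: dict, min_shift: float = 0.05) -> list:
    """Compare two {mask: count} reports for the same field.

    Flags masks that are new since the baseline, masks that have vanished,
    and masks whose share of rows moved by at least min_shift.
    """
    findings = []
    base_total = sum(baseline.values()) or 1
    cur_total = sum(current.values()) or 1
    for m in sorted(current.keys() - baseline.keys()):
        findings.append(f"new mask {m!r} ({current[m]} rows)")
    for m in sorted(baseline.keys() - current.keys()):
        findings.append(f"mask {m!r} no longer present")
    for m in sorted(baseline.keys() & current.keys()):
        before = baseline[m] / base_total
        after = current[m] / cur_total
        if abs(after - before) >= min_shift:
            findings.append(f"share of {m!r} moved {before:.1%} -> {after:.1%}")
    return findings


# Exit-check baseline for a price field, and the report from today's entrance check.
baseline = {"99.99": 980, "9.99": 20}
today = {"99.99": 700, "£99.99": 280, "9.99": 20}

for finding in compare_to_baseline(baseline, today):
    print(finding)
```

Here the check reports the new £99.99 mask and the fall in the share of 99.99, which is the kind of signal that would flag a currency prefix appearing in a price feed before the data reaches a pricing engine.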

Between these two checkpoints, something powerful emerges: line of sight. When a downstream system encounters a quality issue, the entrance check report traces it back to the feed. The feed's exit check report traces it back to the collector. The timeseries shows when the problem started. Connected to lineage tools that track data flow across systems, this creates an automated root cause analysis — not "something is wrong somewhere" but "this specific field in this specific feed from this specific collector started producing a new mask pattern on this date, and the downstream impact is quantifiable."

That quantification matters. When you can say "Department X's data collection issues caused 2,000 downstream failures last quarter, costing an estimated £Y million in rework, delayed decisions, and incorrect outputs," the conversation changes. Quality stops being an abstract concern and becomes a line item. The timeseries is the measuring stick that makes consistent performance conversations possible — not blame, but evidence.

This reframes the purpose of data quality. The traditional question is: "Is this data fit for purpose?" — meaning, can the immediate consumer use it? The better question is: "Is this data fit for the journey?" Data rarely has one consumer. A dataset collected by a local council may pass through a regional aggregator, a central government platform, a statistical publication pipeline, and a public API before reaching its final consumers. Quality at the point of collection is not enough if the data degrades, is misinterpreted, or hits structural incompatibilities at any stage of that journey. Fit for the journey means the data carries enough structural metadata — masks, population profiles, assertion results — to be understood and validated at every stage, by every hand it passes through.

The profiling reports described in this book — both the DQ mask frequency tables and the CP character profiling reports — are the raw telemetry for this monitoring architecture. They are structured, machine-readable, and designed to be stored and queried as fact tables. The technical implementation — time-partitioned directories, DuckDB queries, KPI dashboards — is covered in the Quality Monitoring chapter in Part III.

The tools in Part III are the building blocks. The architecture they enable is a data quality assurance service: continuous, measurable, and accountable.

Let's begin.

Why Data Quality Still Breaks Things

There is a widely cited statistic that poor data quality costs organisations between 15 and 25 percent of revenue. The number has been repeated so often that it has become background noise, the kind of thing people nod at in presentations and then promptly ignore when scoping their next project. The reason it persists, despite everyone knowing about it, is structural: most data quality problems are invisible until they cause a failure downstream, and by then the cost of remediation is orders of magnitude higher than the cost of early detection.

Consider a simple example. A retail company receives a daily feed of product catalogue updates from a supplier. The feed is a CSV file, delivered via SFTP, containing product codes, descriptions, prices, and stock levels. The specification says prices are in GBP, formatted as decimal numbers with two decimal places. For six months the feed arrives on time, the prices parse correctly, and everyone is happy. Then one Monday the supplier's system is upgraded, and the price field starts arriving with a currency symbol prefix — £12.99 instead of 12.99. The downstream pricing engine, which casts the field to a numeric type, throws a parse error. The product catalogue goes stale. Customer-facing prices are wrong for four hours until someone notices and writes a hotfix.

The fix takes ten minutes. The investigation takes two hours. The post-incident review takes half a day. The customer complaints take a week to resolve. The root cause was a single character in a single field, and the total cost — in engineering time, reputational damage, and operational disruption — was wildly disproportionate to the simplicity of the underlying issue.

This pattern repeats across every industry that depends on data received from sources it does not control. The specific failures vary — date formats flip between DD/MM/YYYY and MM/DD/YYYY, encoding shifts from UTF-8 to Latin-1, a column that was always numeric starts containing the string NULL instead of an actual null — but the shape of the problem is always the same. Data that was assumed to be clean turns out not to be, and the assumption is only tested at the point of failure.

The Read Problem

Most data quality tooling is designed around the assumption that you control the data pipeline end to end. Schema validation at the point of entry, constraint enforcement in the database, type checking in the application layer — these are all "quality on write" techniques, and they work well when you are the author of the data. The difficulty arises when you are the reader rather than the writer.

In modern data architectures, the proportion of data that arrives from sources you do not control is substantial and growing. Third-party feeds, partner integrations, open data portals, scraped web content, legacy system exports, IoT sensor streams, and API responses from services maintained by other teams — all of these represent data that was created according to someone else's assumptions, documented (if at all) according to someone else's standards, and delivered with whatever level of quality the source system happened to produce that day.

You cannot fix the source. In many cases you cannot even influence it. What you can do is understand what you have received, quickly and cheaply, before you attempt to use it. That understanding — structural, not semantic — is the domain of Data Quality on Read.

What Goes Wrong

In working with data platforms across financial services, telecoms, government, and open data projects, we have seen the same categories of data quality failure appear repeatedly. They are worth enumerating because they inform the design of the profiling techniques that follow.

Format inconsistency is the most common. A date column contains values in three or four different formats — 2024-01-15, 15/01/2024, Jan 15 2024, and occasionally just 2024 — because the upstream system aggregated data from multiple sources without normalising it. A phone number column mixes UK mobile (07700 900123), international (+44 7700 900123), US ((555) 123-4567), and free-text entries like "ask for Dave". Each of these is individually valid; the problem is that they coexist in the same column with no indicator of which format applies to which record.

Encoding corruption is subtler and often goes undetected for longer. A file that was encoded in Latin-1 is read as UTF-8, producing garbled characters in names and addresses. A byte order mark (BOM) at the start of a CSV causes the first column header to parse incorrectly. Control characters (tabs, carriage returns, null bytes) appear in fields that should contain only printable text, breaking downstream parsers that assumed simple delimited input.
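Each of these failures can be surfaced mechanically before any field-level profiling. The sketch below assumes the expected encoding is UTF-8 and uses only Python's standard library. `file_checks` and `control_chars` are hypothetical helper names, and flagging every Unicode `Cc` (control) character inside a field is one reasonable policy among several.

```python
import csv
import io
import unicodedata

UTF8_BOM = b"\xef\xbb\xbf"


def file_checks(raw: bytes) -> list:
    """File-level encoding checks on the raw bytes, before any parsing."""
    findings = []
    if raw.startswith(UTF8_BOM):
        findings.append("starts with a UTF-8 byte order mark")
    try:
        raw.decode("utf-8")
    except UnicodeDecodeError as exc:
        findings.append(f"invalid UTF-8 at byte offset {exc.start}")
    return findings


def control_chars(value: str) -> list:
    """Code points in Unicode category Cc (tab, CR, NUL, ...) inside one field."""
    return [f"U+{ord(ch):04X}" for ch in value if unicodedata.category(ch) == "Cc"]


# A BOM-prefixed UTF-8 file: decoded naively, the BOM sticks to the first header.
with_bom = "id,name\n1,José\n".encode("utf-8-sig")
print(file_checks(with_bom))
print(next(csv.reader(io.StringIO(with_bom.decode("utf-8"))))[0] == "id")      # False
print(next(csv.reader(io.StringIO(with_bom.decode("utf-8-sig"))))[0] == "id")  # True

# A Latin-1 file read as UTF-8: the byte for é (0xE9) is not valid UTF-8 here.
latin1 = "id,name\n1,José\n".encode("latin-1")
print(file_checks(latin1))
print(latin1.decode("utf-8", errors="replace").splitlines()[1])  # '1,Jos' then U+FFFD

print(control_chars("Smith\x00"), control_chars("a\tb"))
```

Decoding with Python's `utf-8-sig` codec strips a leading BOM, which is why the second header comparison succeeds.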

Structural drift happens when the shape of the data changes over time without corresponding updates to the documentation or the downstream systems that consume it. A new column is added to a feed, shifting all subsequent field positions. An optional field starts being populated where it was previously always empty, triggering unexpected code paths. A field that was always a single value starts containing comma-separated lists.

Placeholder abuse is endemic. The strings N/A, NULL, none, n/a, -, TBC, unknown, test, and the empty string all appear in production data as substitutes for missing values, each encoded differently, each requiring different handling, and none of them matching the expected format of the field they occupy. In one government dataset we profiled, the placeholder REDACTED appeared in the postcode field of 3% of records, which was useful to know before attempting geocoding.

Population shifts are the hardest to detect without profiling. The data looks structurally correct — all the fields parse, all the types are right — but the distribution has changed. A column that previously had 99.5% population now has 15% nulls because an upstream collection process was turned off. A field that used to contain 8 distinct values now contains 47, because a system migration expanded the code set without updating the documentation.
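A population shift can be caught by comparing simple per-field statistics across two snapshots of the same feed. This sketch treats `None` and the empty string as null and uses a hypothetical five-percentage-point tolerance for population drops. The snapshot data are synthetic, built to mirror the figures in the paragraph above.

```python
def field_stats(values: list) -> dict:
    """Population rate and distinct-value count for one field (None or "" = null)."""
    present = [v for v in values if v is not None and v != ""]
    return {
        "population": len(present) / len(values) if values else 0.0,
        "distinct": len(set(present)),
    }


def population_shift(before: list, after: list, max_drop: float = 0.05) -> list:
    """Compare two snapshots of the same field and describe any shift."""
    a, b = field_stats(before), field_stats(after)
    alerts = []
    if a["population"] - b["population"] > max_drop:
        alerts.append(f"population fell from {a['population']:.1%} to {b['population']:.1%}")
    if b["distinct"] > a["distinct"]:
        alerts.append(f"distinct values rose from {a['distinct']} to {b['distinct']}")
    return alerts


# Synthetic snapshots: 99.5% populated with 8 codes, then 85% populated with 47 codes.
last_month = [None] * 5 + [f"C{i % 8}" for i in range(995)]
this_month = [None] * 150 + [f"C{i % 47}" for i in range(850)]
for alert in population_shift(last_month, this_month):
    print(alert)
```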

None of these problems are exotic. They are the ordinary, everyday reality of working with data that someone else created. The question is not whether they exist in your data — they almost certainly do — but whether you have a systematic way of finding them before they cause harm.

The Limits of Traditional Approaches

Before introducing mask-based profiling, it is worth understanding why the existing approaches leave gaps. Not because they are bad — many of them are excellent at what they do — but because they share a common assumption that limits their applicability to the "quality on read" problem.

Schema Validation

The most established approach to data quality is schema validation: define the expected types, formats, and constraints for each field, then reject or flag records that do not conform. This is the approach used by database constraints, JSON Schema, XML Schema (XSD), Avro, Protobuf, and a host of other technologies. It works well in systems where you control the schema and the data is produced by software that respects it.

The limitation is that schema validation requires you to know what the data should look like before you receive it. When you are exploring a new dataset — a third-party feed you have never seen before, an open data portal with sparse documentation, or a legacy system export where the specification was written ten years ago and has not been updated since — the schema is precisely the thing you are trying to discover. Validating against an assumed schema at this stage risks either rejecting valid data that does not match your assumptions, or accepting invalid data that happens to pass your checks by coincidence.

There is also the problem of data that is "technically valid" but semantically broken. A date field containing 0000-00-00 will pass a format check for YYYY-MM-DD but is clearly not a real date. A numeric field containing 999999 will pass a type check but may be a sentinel value meaning "not applicable." Schema validation catches structural violations but tells you nothing about whether the values themselves make sense.

Statistical Profiling

Tools like Great Expectations, dbt tests, and pandas-profiling take a different approach: compute summary statistics for each column (null counts, min/max values, cardinality, distributions, standard deviations) and flag deviations from expected ranges. This is useful for ongoing monitoring of data pipelines where you have a baseline to compare against, and it catches population-level issues (sudden spikes in nulls, unexpected changes in cardinality) that schema validation misses.

The limitation is that aggregate statistics hide structural detail. A column with 99% valid email addresses and 1% phone numbers will report a cardinality, a null rate, and a string length distribution that all look reasonable. The phone numbers, wrong data in the wrong field, will not appear as outliers in any of these measures, because they are similar to email addresses in length and add nothing unusual to the null count or the cardinality. You need to see the patterns to spot the problem, and summary statistics do not show patterns.
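A small illustration with made-up values: the phone number falls inside the email column's length range and adds nothing unusual to the null or distinct counts, yet its mask is the only one without an @. The `mask` helper applies the character-class translation from the Introduction (uppercase to A, lowercase to a, digits to 9); the `@` check at the end is a hypothetical follow-up rule, not part of profiling itself.

```python
import string
from collections import Counter

# ASCII character-class mask: A-Z -> A, a-z -> a, 0-9 -> 9, everything else unchanged.
_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def mask(value: str) -> str:
    return value.translate(_MASK)


column = [
    "ann.lee@mail.com",
    "tom.fox@mail.com",
    "sam.orr@mail.com",
    "raj.patel@mail.com",
    "li.wei@mail.com",
    "+44 7700 900123",  # a phone number in the email column
]

# Summary statistics: nothing stands out.
lengths = [len(v) for v in column]
print("nulls:", sum(v == "" for v in column), "distinct:", len(set(column)))
print("length min/max:", min(lengths), max(lengths))

# Mask frequencies: the odd one out is visible at a glance.
masks = Counter(mask(v) for v in column)
for m, n in masks.most_common():
    print(f"{n:>3} {m}")

# Hypothetical follow-up rule: an email mask should contain an @.
no_at = [m for m in masks if "@" not in m]
print(no_at)  # ['+99 9999 999999']
```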

Statistical methods also require a baseline: they tell you that something has changed, but not what the data looks like in the first place. For initial exploration of an unfamiliar dataset, they give you numbers without context.

Regex-Based Validation

Regular expressions allow precise format validation: a UK postcode matches [A-Z]{1,2}[0-9R][0-9A-Z]? [0-9][ABD-HJLNP-UW-Z]{2}, an email matches a well-known (and notoriously complex) pattern, a date matches \d{4}-\d{2}-\d{2}. When you know exactly what formats to expect, regex validation is powerful and precise.

The limitation is combinatorial. Each expected format requires its own expression. A phone number column that contains UK mobiles, UK landlines, international numbers with country codes, and US-formatted numbers needs at least four regex patterns — and that is before you account for variations in spacing, punctuation, and prefix formatting. For every new format you discover, you write another regex. For every field in every dataset, you maintain a library of patterns. The approach does not scale to exploratory work where you do not yet know what formats exist.

More fundamentally, regex validation is a confirmation technique: it confirms that data matches a pattern you already know about. It does not help you discover what patterns exist in data you have never seen before. Discovery requires a different tool.
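The contrast between confirmation and discovery shows up even on a tiny column. The four patterns here are deliberately simplified stand-ins for real phone formats, not production-grade validators. Regex matching reports which values failed; masking those failures reveals what shape they actually have, which is the information needed to decide whether a fifth or sixth pattern is warranted.

```python
import re
import string

# ASCII character-class mask: A-Z -> A, a-z -> a, 0-9 -> 9, everything else unchanged.
_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def mask(value: str) -> str:
    return value.translate(_MASK)


# Simplified, illustrative patterns; real phone validation needs far more.
PHONE_PATTERNS = {
    "uk_mobile": re.compile(r"07\d{3} \d{6}"),
    "uk_landline": re.compile(r"0\d{2,4} \d{3,4} \d{3,4}"),
    "international": re.compile(r"\+\d{1,3} \d{4} \d{6}"),
    "us": re.compile(r"\(\d{3}\) \d{3}-\d{4}"),
}

values = [
    "07700 900123",
    "+44 7700 900123",
    "(555) 123-4567",
    "020 7946 0958",
    "07700900123",
    "ask for Dave",
]

# Confirmation: which values match none of the known patterns?
unmatched = [v for v in values if not any(p.fullmatch(v) for p in PHONE_PATTERNS.values())]

# Discovery: the mask shows what shape each failure actually has.
for v in unmatched:
    print(f"{v!r:16} -> {mask(v)}")
```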

What They All Share

All three approaches — schema validation, statistical profiling, and regex-based validation — share a common assumption: you already know what the data should look like. They are verification techniques, designed to confirm expectations. When those expectations are correct and the data is well-understood, they work beautifully.

The gap they leave is in discovery: the initial exploration of unfamiliar data, where you have no schema, no baseline statistics, and no library of expected formats. You need something that will show you what the data does look like, without requiring you to tell it what to look for. That is the role of mask-based profiling.

Schema on Read, Quality on Read

The idea of deferring structural interpretation until the point of consumption is well established in data architecture. In the Hadoop era, it acquired a name — Schema on Read — and it changed how large-scale data platforms were designed. Rather than enforcing a rigid schema at the point of ingest (Schema on Write, the relational database approach), data lakes accept raw data in whatever format it arrives, store it cheaply, and apply structural interpretation only when a consumer reads the data for a specific purpose.

The benefits of Schema on Read are widely understood. Raw data is preserved in its original form, providing provenance and auditability. Multiple consumers can apply different schemas to the same underlying data, supporting different use cases without duplicating pipelines. When schemas change (and they always change), historical data does not need to be migrated: the raw material is still there, and the new schema is applied when it is read. The trade-off is that consumers bear the cost of interpretation, but in practice this cost is modest compared to the flexibility gained.

Data Quality on Read (DQOR) applies exactly the same principle to data quality processing. Instead of cleansing, validating, enriching, and remediating data at the point of ingest — which requires perfect upfront knowledge of the data, slows pipeline velocity, and risks overwriting original values with incorrect corrections — DQOR defers all quality processing until the moment the data is actually consumed.

The core workflow is simple:

- Ingest raw data as fast as possible, preserving it exactly as received.

- At read time, profile the data to discover its structural characteristics.

- Generate quality metadata (masks, assertions, suggested treatments) alongside the raw values.

- Let downstream consumers choose which treatments to apply — or to ignore them entirely and work with the original.

The raw data is never overwritten. The quality metadata is never mandated. Consumers see both the original value and the profiler's assessment of it, and they make their own decisions about what to trust. This is a fundamental design choice: suggestions, never mandates.

Why This Matters

There are several practical reasons why deferring quality processing to read time is advantageous, particularly for data received from external sources.

First, it preserves the original data as an immutable audit trail. In regulated industries (financial services, healthcare, government), the ability to trace a derived value back to the exact bytes that were received from the source system is not a convenience — it is a compliance requirement. DQOR provides this by construction, because the raw data is never modified.

Second, it accommodates imperfect knowledge. When you first receive a new data feed, you rarely understand it fully. The documentation may be incomplete, the specification may be out of date, and the actual data may contain patterns that nobody anticipated. If you apply quality rules at ingest time based on incomplete understanding, you risk discarding or corrupting valid data. By deferring quality processing, you give yourself time to learn the data before committing to a treatment strategy — and when your understanding improves, you can reprocess the history without re-ingesting it.

Third, it supports multiple consumers with different quality requirements. A data science team exploring patterns in raw sensor data may want the original values, noise and all. A reporting team feeding a customer-facing dashboard may want aggressively cleaned and normalised data. A compliance team may want to see every record that was flagged as anomalous, with the raw value alongside the flag. DQOR supports all three from the same source, without separate pipelines.

Fourth, it decouples ingest velocity from quality processing. Data acquisition pipelines can focus on reliability and throughput — landing data on the platform as fast as the source can deliver it — without being slowed by the computational overhead of profiling, validation, and remediation. Quality processing happens later, on the consumer's schedule, using the consumer's compute budget. In streaming architectures, where latency matters, this decoupling is particularly valuable.

The Parallel With Feature Stores

For anyone who has worked with machine learning feature stores, the DQOR pattern will feel familiar. A feature store holds pre-computed features alongside the raw data they were derived from, so that model-serving pipelines can retrieve prediction-ready inputs without recomputing them at request time. The raw data is never discarded; the features are additive layers that sit alongside it.

DQOR follows the same structural logic. The raw value is preserved. Quality metadata — masks, assertions, suggested treatments — are generated as additional columns that sit alongside the raw value in the same row. Downstream consumers select the columns they need. Adding a new quality check or a new treatment is just adding another column; it never touches the original data, and it never requires reprocessing existing outputs.

The enhanced output is a nested record format: each field in the original data becomes a JSON object containing the raw value, its masks, and any inferred rules. The flat enhanced format takes this a step further, flattening those nested objects into dotted key-value pairs (e.g. fieldname.raw, fieldname.HU, fieldname.Rules.is_numeric). Quality metadata travels with the data it describes, with no joins, no lookups, and no separate tables. We will return to the specific implementation of this pattern in Chapter 9.
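The nested and flat forms can be sketched in a few lines. This is an illustration under assumptions, not the bytefreq output schema: field values are strings, the `HU` entry is filled by a plain ASCII character-class mask rather than the tool's own masking, and `is_numeric` is a hypothetical rule.

```python
import json
import re
import string

# ASCII character-class mask standing in for HU: A-Z -> A, a-z -> a, 0-9 -> 9.
_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def hu_mask(value: str) -> str:
    return value.translate(_MASK)


def is_numeric(value: str) -> bool:
    """Hypothetical rule: optional sign, ASCII digits, optional decimal part."""
    return re.fullmatch(r"[+-]?[0-9]+(\.[0-9]+)?", value) is not None


def enhance(record: dict) -> dict:
    """Nested enhanced form: each field becomes {raw, HU, Rules}; raw is never altered."""
    return {
        name: {"raw": raw, "HU": hu_mask(raw), "Rules": {"is_numeric": is_numeric(raw)}}
        for name, raw in record.items()
    }


def flatten(nested: dict, prefix: str = "") -> dict:
    """Flatten nested dicts into dotted keys, e.g. price.Rules.is_numeric."""
    flat = {}
    for key, value in nested.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


row = {"price": "£12.99", "qty": "3"}
flat = flatten(enhance(row))
print(json.dumps(flat, ensure_ascii=False, indent=2))
```

Adding another rule means adding another key under `Rules`; the `raw` entry is never touched, which is the additive property that makes the pattern resemble a feature store.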

Mask-Based Profiling

A mask, in the context of data profiling, is a transformation function applied to a string that generalises the value into a structural fingerprint. The transformation replaces every letter and digit with a symbol representing its character class, while leaving punctuation and whitespace unchanged. When a column of data is summarised by counting the frequency of each resulting mask (a process commonly called data profiling), it reveals the structural patterns hiding inside the data, quickly and without assumptions.

The basic translation is as follows:

- Uppercase letters (A–Z) are replaced with A
- Lowercase letters (a–z) are replaced with a
- Digits (0–9) are replaced with 9
- Everything else (punctuation, spaces, symbols) is left unchanged
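The translation above fits in a few lines of Python. This sketch uses str.translate with a lookup table. Any character outside A–Z, a–z and 0–9 is absent from the table and so passes through unchanged, including non-ASCII letters, which the Unicode-aware masks later in this chapter handle.

```python
import string

# Translation table: A-Z -> A, a-z -> a, 0-9 -> 9. Characters not in the
# table (punctuation, spaces, symbols, non-ASCII) pass through unchanged.
_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def ascii_mask(value: str) -> str:
    """Apply the basic A/a/9 mask to one value."""
    return value.translate(_MASK)


for v in ["nytimes.com", "EH47 8PG", "O'Brien"]:
    print(f"{v:>12} -> {ascii_mask(v)}")
```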

It seems like a very simple transformation at first glance. To see why it is useful, consider applying it to a column of data that is documented as containing domain names. We expect values like nytimes.com. Applying the mask, we get:

232 aaaa.aaa

195 aaaaaaaaaa.aaa

186 aaaaaa.aaa

182 aaaaaaaa.aaa

168 aaaaaaa.aaa

167 aaaaaaaaaaaa.aaa

167 aaaaa.aaa

153 aaaaaaaaaaaaa.aaa

Very quickly, the mask reduces thousands of unique domain names down to a short list of structural patterns — all of which look like domain names, confirming our expectation. But what about the long tail? The rare masks that appear only a handful of times?

2 AAA Aa

1 a.99a.a

1 9a9a.a

There is a mask — AAA Aa — that does not contain a dot, which we would expect in any domain name. This immediately stands out as structurally different from the rest. When we use the mask to retrieve the original values, we find the text BBC Monitoring — not a domain name at all, but a general descriptor that someone has used in a field designed for domain names. In re-reading the GDELT documentation we discover that this is not an error but a known special case, meaning when we use this field we must handle it. Perhaps we include a correction rule to swap the string for the valid domain www.monitor.bbc.co.uk, which is the actual source.

A second example, from real UK Companies House data, shows what happens when a field contains data from the wrong column entirely. The RegAddress.PostTown field — the registered office town — produces dozens of masks at LU grain. The dominant patterns are all legitimate town names: A (single words like READING, 84.2%), A A (two words like HEBDEN BRIDGE, 6.3%), and several hyphenated or abbreviated forms. But in the long tail:

| Mask | Count | Example |
|---|---|---|
| A9 9A | 14 | EH47 8PG |
| 9 A A | 32 | 150 HOLYWOOD ROAD |
| 9-9 A A | 10 | 1-7 KING STREET |
| 9A A | 1 | 2ND FLOOR |
| 9 | 2 | 20037 |

Postcodes in the town field. Street addresses in the town field. A US ZIP code. A floor number. The masks expose column misalignment that no town-name validation rule would detect — because EH47 8PG is a perfectly valid string, just in the wrong column. The mask A9 9A in a town field is diagnostic: towns do not have that structure, but postcodes do. (For the complete field-by-field analysis of this dataset, see the Worked Example: Profiling UK Companies House Data appendix.)

The idea we are introducing here is that a mask can be used as a key to retrieve records of a particular structural type from a particular field. Before we explore that idea further (it leads directly to the concept of masks as error codes, covered in Chapter 7), it is worth understanding the mechanics of the mask itself in more detail.
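Using a mask as a retrieval key amounts to indexing raw values by their mask. The sketch below assumes the whole column fits in memory, and its sample town values are illustrative only. Sorting the index by group size puts the long tail first, and looking up a single mask returns the records behind it.

```python
import string
from collections import defaultdict

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def index_by_mask(values):
    """Map each mask to the raw values that produced it, so a mask works as a retrieval key."""
    index = defaultdict(list)
    for value in values:
        index[value.translate(_MASK)].append(value)
    return index


# Illustrative sample of a town field with a misplaced postcode.
towns = ["READING", "HEBDEN BRIDGE", "EH47 8PG", "READING", "150 HOLYWOOD ROAD"]
index = index_by_mask(towns)

# Rarest masks first: the long tail is where the questions are.
for mask, raw in sorted(index.items(), key=lambda kv: len(kv[1])):
    print(f"{len(raw):>3}  {mask:<20} {raw[0]}")

print(index["AA99 9AA"])  # ['EH47 8PG']
```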

Why Masks Work

The power of mask-based profiling comes from a simple mathematical property: the mask function is a many-to-one mapping that dramatically reduces cardinality while preserving structural information. A column of ten million customer names might contain two million unique values, but after masking it might contain only a few hundred unique patterns. A column of phone numbers with a million unique values might collapse to a dozen structural formats.

This cardinality reduction is what makes manual inspection feasible. No human can review two million unique names, but anyone can scan a frequency table of two hundred masks and immediately identify the dominant patterns and the outliers. The mask strips away the content (the specific name, the specific number) and reveals the shape (the format, the structure, the encoding).
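The cardinality reduction is easy to demonstrate. The sketch below generates a synthetic column (these are not real data): 1,000 distinct numbers with the UK mobile shape, plus two placeholders. It then compares distinct values with distinct masks.

```python
import string

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)

# Synthetic column: 1,000 distinct numbers shaped like UK mobiles, plus two placeholders.
values = [f"07{n:03d} {n * 7:06d}" for n in range(1000)] + ["N/A", "none"]
masks = [v.translate(_MASK) for v in values]

print("distinct values:", len(set(values)))  # 1002
print("distinct masks: ", len(set(masks)))   # 3
print(sorted(set(masks)))
```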

Consider a customer name column:

| Original Value | Mask |
|---|---|
| John Smith | Aaaa Aaaaa |
| JOHN SMITH | AAAA AAAAA |
| john smith | aaaa aaaaa |
| O'Brien | A'Aaaaa |
| Jean-Pierre | Aaaa-Aaaaaa |
| 12345 | 99999 |
| N/A | A/A |

From the masks alone, without looking at the values, we can see: most records are capitalised names (Aaaa Aaaaa), some are in all-caps or all-lowercase (normalisation candidates), some contain apostrophes or hyphens (legitimate but structurally distinct), one is numeric (almost certainly an error — a customer ID in a name field), and one is a placeholder. The mask gives us a classification of structural types in a single pass.

A worked example from the French lobbyist registry illustrates this vividly. The first name field (dirigeants.prenom) produces four masks at LU grain:

| Mask | Count | Example |
|---|---|---|
| Aa | 697 | Carole |
| Aa-Aa | 50 | Marc-Antoine |
| Aa Aa | 11 | Marie Christine |
| Aa_a | 1 | Ro!and |

The first three are expected: simple names, hyphenated compounds (common in French), and space-separated compounds. The fourth is the standout: Aa_a — one record where Ro!and contains an exclamation mark where the letter l should be. The intended name is Roland, but a data entry error has replaced a letter with adjacent punctuation. No schema would catch this — the field is a valid string. No length check would catch it — six characters is reasonable. But the mask catches it instantly because ! is punctuation, not a letter, and the structural pattern is fundamentally different from every other value in the field. (For the full analysis, see the Worked Example: Profiling the French Lobbyist Registry appendix.)

Prototyping on the Command Line

One of the virtues of mask-based profiling is that it can be prototyped with standard Unix tools in a single line:

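# Extract column 4 of a tab-delimited file, apply the A/a/9 mask, and list the 20 most frequent masks.
# LC_ALL=C makes [a-z] and [A-Z] match only ASCII letters, whatever the user's locale.
gawk -F'\t' '{print $4}' data.csv |
  LC_ALL=C sed 's/[0-9]/9/g; s/[a-z]/a/g; s/[A-Z]/A/g' |
  sort | uniq -c | sort -rn | head -20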

This extracts column 4 from a tab-delimited file, applies the A/a/9 mask using sed, sorts the results, counts unique masks, and displays the top 20 by frequency. It runs in seconds on files with millions of rows, and the output is immediately interpretable. We open-sourced a more fully-featured version of this profiler — called bytefreq (short for byte frequencies) — originally written in awk, and later rewritten in Rust. The awk version is available for readers who want to understand the mechanics; the Rust version is what you would use in production. Both are discussed in Chapter 10.
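For readers who prefer a script to a pipeline, here is a rough Python counterpart of the one-liner. It is a sketch, not bytefreq. It assumes a UTF-8 tab-delimited file with no quoting, and it mimics awk by treating a missing column as an empty string.

```python
import string
import sys
from collections import Counter

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def profile_column(path, column, top=20):
    """Mask one column of a tab-delimited file and return the `top` most frequent masks.
    `column` is 1-based, like awk's $4."""
    counts = Counter()
    with open(path, encoding="utf-8", newline="") as fh:
        for line in fh:
            fields = line.rstrip("\r\n").split("\t")
            value = fields[column - 1] if len(fields) >= column else ""  # awk yields "" too
            counts[value.translate(_MASK)] += 1
    return counts.most_common(top)


if __name__ == "__main__" and len(sys.argv) == 3:
    # usage: python profile_column.py data.csv 4
    for mask, n in profile_column(sys.argv[1], int(sys.argv[2])):
        print(f"{n:>8} {mask}")
```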

The ability to prototype the technique in a one-liner is important not because the one-liner is a production tool, but because it demonstrates that the underlying idea is genuinely simple. There is no machine learning, no complex configuration, no training data. It is a mechanical character-by-character translation followed by a frequency count. The power comes not from the complexity of the method but from the interpretability of the output.

Grain, Scripts, and Character Classes

The basic A/a/9 mask described in the previous chapter works well for ASCII data and covers the majority of use cases in structured data profiling. But real-world data — particularly data sourced from open data portals, international organisations, and multilingual systems — contains characters that the simple ASCII mask cannot adequately describe. Accented characters, CJK ideographs, Arabic script, Devanagari, Cyrillic, Thai, Ethiopic, and increasingly emoji all appear in production datasets, and a profiler that treats them all as "other" is losing information.

This chapter introduces two extensions to the basic mask: grain levels, which control the resolution of the mask, and Unicode-aware character class translation, which extends masking across the full range of human writing systems.

High Grain and Low Grain

A high grain mask preserves the exact length and position of every character in the original value. Every character maps individually, so John Smith becomes Aaaa Aaaaa and Jane Doe becomes Aaaa Aaa. These are two different masks, because the strings have different lengths.

A low grain mask collapses consecutive runs of the same character class into a single symbol. Under low grain masking, John Smith becomes Aa Aa, and Jane Doe also becomes Aa Aa. The two values now share the same mask, because at the low grain level they have the same structure: a capitalised word followed by a space followed by another capitalised word.
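A minimal sketch of the two grains follows. Low grain is derived from the high grain mask by collapsing each run of A, a or 9 into a single symbol. The text does not say whether runs of punctuation or whitespace are also collapsed, so this sketch assumes they are copied through unchanged.

```python
import string

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def hu_mask(value: str) -> str:
    """High grain: one symbol per character."""
    return value.translate(_MASK)


def lu_mask(value: str) -> str:
    """Low grain: collapse each run of the same class symbol (A, a or 9) into one.
    Assumption for this sketch: punctuation and whitespace are copied as-is, not collapsed."""
    out = []
    for sym in hu_mask(value):
        if sym in "Aa9" and out and out[-1] == sym:
            continue  # same class as the previous symbol: part of the same run
        out.append(sym)
    return "".join(out)


for name in ["John Smith", "Jane Doe"]:
    print(f"{name:<12} HU={hu_mask(name):<12} LU={lu_mask(name)}")
```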

The distinction matters because the two grain levels serve different purposes.

Low grain is the tool for discovery. When you first encounter an unfamiliar dataset and want to understand the structural families present in a column, low grain masking collapses millions of unique values into a handful of patterns. A name column that produces thousands of unique high grain masks (varying by name length) might produce only four or five low grain masks: Aa Aa (first name, last name), Aa A. Aa (with middle initial), Aa (single name), 9 (numeric — investigate), and A/A (placeholder). This immediate simplification makes the data comprehensible at a glance.

The effect is dramatic with non-Latin scripts. When profiling Japanese earthquake data from JMA, the hypocenter name field, which contains kanji place names of varying length and composition, collapses almost entirely to a single mask at LU grain:

| Mask | Count | Example |
|---|---|---|
| a | 78 | 福島県会津 |
| a_a | 1 | (compound name with punctuation) |

78 of 79 values produce the same mask: a. CJK ideographs belong to Unicode category Lo (Letter, other), which the mask maps to a, and low grain collapses consecutive characters of the same class. A four-character name and an eight-character name are therefore structurally identical at this grain. The one exception, a_a (a name containing a punctuation separator), stands out immediately. At HU grain, the same 78 records would split into a separate mask for every distinct name length (aaaa, aaaaa, and so on). At LU grain, you see the structural family at a glance. (See the Worked Example: Profiling JMA Earthquake Data appendix for the full analysis.)

High grain is the tool for precision. Once you have identified the structural families using low grain, you can drill into a specific family with high grain masking to see the exact formats. For a postcode column, low grain might show two families: A9 9A and a smaller A9A 9A. High grain splits the first into AA99 9AA, A99 9AA, A9 9AA and AA9 9AA, and the second into AA9A 9AA and A9A 9AA. Together these are the six standard UK postcode formats, each with its own frequency, which lets you verify completeness and detect anomalies.

A subtler example of when HU grain is needed comes from the French lobbyist registry. The title field (dirigeants.civilite) contains M (Monsieur) and MME (Madame). At LU grain, both collapse to A — a single mask covering all 760 values, suggesting perfect uniformity. At HU grain, M produces A and MME produces AAA, cleanly separating the two populations. The LU profile tells you the field is consistently alphabetic. The HU profile tells you there are exactly two formats and what they are. The choice of grain determines the question you are answering. (See the Worked Example: Profiling the French Lobbyist Registry appendix.)

The typical workflow is a two-pass approach: start with low grain to survey the landscape, then switch to high grain to examine specific areas of interest. This mirrors how experienced data engineers actually work — broad scan first, targeted investigation second — and the two grain levels formalise that workflow into the tool.
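The two-pass workflow can be sketched as a nested count: the outer key is the low grain family and the inner counter holds the high grain formats within it. The postcodes are illustrative values chosen to cover all six UK formats, and the run-collapsing rule carries the same assumption as before (punctuation is not collapsed).

```python
import string
from collections import Counter, defaultdict

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def hu_mask(value: str) -> str:
    return value.translate(_MASK)


def lu_mask(value: str) -> str:
    # Collapse runs of A, a or 9; punctuation and spaces are copied unchanged (assumption).
    out = []
    for sym in hu_mask(value):
        if sym in "Aa9" and out and out[-1] == sym:
            continue
        out.append(sym)
    return "".join(out)


def survey_then_drill(values):
    """Pass 1 groups values into low grain families; pass 2 counts high grain formats within each."""
    families = defaultdict(Counter)
    for v in values:
        families[lu_mask(v)][hu_mask(v)] += 1
    return families


sample = ["EH47 8PG", "BR7 5HF", "M2 2EE", "B33 8TH", "W1A 1AA", "SW1A 1AA"]
families = survey_then_drill(sample)
for lu, formats in sorted(families.items(), key=lambda kv: -sum(kv[1].values())):
    print(lu, dict(formats))
```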

Unicode Character Classes

The original bytefreq implementation, written in awk and designed for ASCII data, mapped characters byte-by-byte. Each byte (0–255) was assigned a character class based on its position in the ASCII table, and the mapping was deterministic regardless of the encoding of the input. This had the pragmatic advantage of working consistently on any input — including binary data and files with mixed or unknown encodings — because it made no assumptions about what the bytes represented. It was, deliberately, a byte-level tool.

As the world has moved to Unicode, the byte-level approach needed extending. Modern datasets contain text in dozens of scripts, and a useful profiler needs to handle them without requiring language-specific configuration. The current implementations — both the Rust-based bytefreq CLI and the WebAssembly-based DataRadar browser tool — support Unicode-aware masking at two levels, which we call HU (High Unicode) and LU (Low Unicode), extending the high/low grain concept into the Unicode space.

Under Unicode-aware masking, the character class translation uses the Unicode General Category to determine how each character is mapped:

- Lu (Letter, uppercase) → A
- Ll (Letter, lowercase) → a
- Lt (Letter, titlecase) → A
- Lo (Letter, other: CJK ideographs, Arabic, Thai, etc.) → a
- Nd (Number, decimal digit) → 9
- Punctuation categories (Pc, Pd, Pe, Pf, Pi, Po, Ps) → kept as-is
- Symbol categories (Sc, Sk, Sm, So) → kept as-is
- Separator categories (Zs, Zl, Zp) → kept as-is

This means that a Chinese place name like 北京饭店 (Beijing Hotel) produces a high grain mask of aaaa (four Lo characters, each mapped to a). A three-word Arabic address produces a a a at low grain, preserving the spaces between words. An Icelandic name like Jökulsárlón produces Aaaaaaaaaaa (one A followed by ten a), preserving the capitalisation structure even though the accented characters are outside the basic ASCII range.
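The category mapping above can be sketched with Python's standard unicodedata module. Two details are assumptions of this sketch and are not taken from bytefreq or DataRadar. First, values are normalised to NFC so that an accented letter stored as base letter plus combining mark is masked as one letter. Second, categories the list does not mention (for example Lm, Mn or No) are kept as-is.

```python
import unicodedata


def unicode_mask(value: str) -> str:
    """High grain Unicode mask driven by the General Category of each character."""
    # Assumption: compose to NFC first, so "o" + U+0308 is masked like "ö".
    value = unicodedata.normalize("NFC", value)
    out = []
    for ch in value:
        cat = unicodedata.category(ch)
        if cat in ("Lu", "Lt"):
            out.append("A")
        elif cat in ("Ll", "Lo"):
            out.append("a")
        elif cat == "Nd":
            out.append("9")
        else:
            # Punctuation (P*), symbols (S*) and separators (Z*) are kept as-is;
            # unlisted categories are kept too, which is an assumption here.
            out.append(ch)
    return "".join(out)


for v in ["北京饭店", "Jökulsárlón", "O'Brien", "１２３"]:
    print(f"{v} -> {unicode_mask(v)}")
```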

The practical benefit is that profiling works across scripts without configuration. When profiling a global places dataset containing names in Chinese, Thai, Arabic, Cyrillic, Devanagari, Ethiopic, and Latin scripts, the profiler does not need to be told which languages to expect. It uses the Unicode category of each character to generate masks that preserve structure, and the frequency analysis surfaces the dominant patterns regardless of script.

Script Detection

In addition to mask generation, both DataRadar and bytefreq perform automatic script detection per field, reporting the dominant scripts found in each column. This is implemented by examining the Unicode script property of each character and aggregating across all values in the field.

Script detection serves two purposes. First, it flags potential encoding issues: a column that is expected to contain Latin-script names but reports a significant minority of Cyrillic characters may have an encoding corruption (Cyrillic and Latin share visual forms for several characters, and mojibake — text decoded with the wrong character set — often manifests as unexpected script mixing). Second, it informs downstream processing: a column containing mixed Latin and Arabic text may need bidirectional text handling, which is worth knowing before it breaks a downstream rendering system.
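Python's standard library does not expose the Unicode Script property. This sketch therefore swaps in a rough heuristic: the first word of each letter's Unicode character name (LATIN, CYRILLIC, CJK, ARABIC and so on). It is an illustration of per-field script aggregation, not how bytefreq or DataRadar detect scripts, and it has known gaps (full-width Latin letters, for instance, report FULLWIDTH).

```python
import unicodedata
from collections import Counter
from typing import Optional


def rough_script(ch: str) -> Optional[str]:
    """Approximate the script of a letter from the first word of its Unicode name.
    A heuristic stand-in for the Unicode Script property."""
    if not unicodedata.category(ch).startswith("L"):
        return None  # digits, punctuation and spaces are not counted
    name = unicodedata.name(ch, "")
    return name.split(" ")[0] if name else "UNKNOWN"


def script_profile(values):
    """Count letters per (approximate) script across all values in a field."""
    counts = Counter()
    for value in values:
        for ch in value:
            script = rough_script(ch)
            if script is not None:
                counts[script] += 1
    return counts


# The second value uses a Cyrillic a (U+0430) that looks identical to the Latin a.
print(script_profile(["Paris", "P\u0430ris", "北京"]))
```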

Character Profiling

Character Profiling — CP mode — is a complementary technique to mask profiling. Where mask profiling translates each character to its class (A, a, 9) and counts the frequency of the resulting masks, CP mode counts the actual characters — the literal Unicode code points — present in a field. The question it answers is different: not "what structures exist in this data?" but "what characters actually appear in this data?"

This distinction is particularly revealing for non-Latin scripts. When profiling Japanese earthquake data from JMA (the Japan Meteorological Agency), CP mode revealed the presence of full-width digits (０, １, ２, ３ and so on) alongside standard ASCII digits (0, 1, 2, 3). Full-width digits belong to Unicode category Nd, so at both HU and LU grain they map to 9 just as ASCII digits do. Mask profiling alone cannot distinguish them, because the masks are identical. CP mode surfaces the actual character inventory, making the mixing of digit forms immediately visible.

CP mode is equally powerful for detecting encoding anomalies. Consider a field that should contain French accented characters (é, è, ê, ç, à) but whose character inventory also includes Â, Ã, or the sequence Ã©. Those are the telltale signatures of mojibake: UTF-8 byte sequences that have been decoded as Latin-1 (or Windows-1252). The two-byte UTF-8 encoding of é (0xC3 0xA9) becomes Ã© when misinterpreted as two single-byte Latin-1 characters. The character inventory is the diagnostic. You do not need to write encoding-specific validation rules, because the wrong characters simply show up in the profile.
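The mechanism is easy to reproduce in Python. The sketch below creates mojibake with a UTF-8 to Latin-1 round trip. It then tests whether a suspicious value can be reversed, which is a heuristic check and not proof of corruption. Only the Latin-1 case is covered; text misread as Windows-1252 would need the same round trip with that codec.

```python
from typing import Optional


def mojibake(text: str) -> str:
    """Reproduce the classic error: UTF-8 bytes decoded as Latin-1."""
    return text.encode("utf-8").decode("latin-1")


def try_repair(value: str) -> Optional[str]:
    """If `value` reads as UTF-8 that was wrongly decoded as Latin-1, return the
    repaired text; otherwise return None."""
    try:
        fixed = value.encode("latin-1").decode("utf-8")
    except (UnicodeEncodeError, UnicodeDecodeError):
        return None  # not representable in Latin-1, or the bytes are not valid UTF-8
    return fixed if fixed != value else None


print(mojibake("é"))        # Ã©
print(try_repair("CafÃ©"))  # Café
print(try_repair("Café"))   # None
```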

The practical workflow is straightforward: run mask profiling first to understand the structural patterns in your data, then run CP mode on fields where the character inventory matters. Names, addresses, free-text descriptions, any field where you suspect encoding issues or script mixing — these are the candidates. Mask profiling tells you the shape; CP mode tells you the alphabet.

CP mode output is itself a frequency table — character, count, percentage — ordered by frequency. Like mask profiles, it can be stored as a fact table and monitored over time. If a field that historically contained only Latin characters suddenly shows Cyrillic or CJK code points, that is a data quality event worth investigating. The character inventory becomes a baseline, and deviations from it become signals.
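A CP-style frequency table can be sketched with a Counter over code points. The output shape (character, code point, Unicode name, count, percentage) is an assumption for illustration, not the exact bytefreq or DataRadar layout. The sample values mixing ASCII and full-width digits are illustrative.

```python
import unicodedata
from collections import Counter


def character_profile(values):
    """CP mode sketch: frequency of each literal code point in a field, most frequent first.
    Returns (character, code point, Unicode name, count, percentage) tuples."""
    counts = Counter(ch for value in values for ch in value)
    total = sum(counts.values())
    return [
        (ch, f"U+{ord(ch):04X}", unicodedata.name(ch, "<unnamed>"), n, 100.0 * n / total)
        for ch, n in counts.most_common()
    ]


# Illustrative depth values mixing ASCII and full-width digits.
for ch, cp, name, n, pct in character_profile(["10", "１０", "30"]):
    print(f"{ch}  {cp:<8} {n:>3} {pct:5.1f}%  {name}")
```

Storing each run's set of code points makes the baseline comparison described above a simple set difference between today's inventory and the historical one.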

Casing as a Data Quality Signal

The distinction between HU and LU grain is not just about collapsing length; masks at either grain also reveal casing inconsistency. At HU grain, France, FRANCE, france, and FRance produce four different masks: Aaaaaa, AAAAAA, aaaaaa, AAaaaa. At LU grain, the first three collapse to Aa, A, and a respectively: still distinct, still diagnostic. The fourth, FRance, collapses to Aa at LU grain, merging with the title-case form, so only HU grain separates it. The broader point is that most casing variation survives the grain reduction; LU grain does not erase it.

A real example: profiling the country field in the French lobbyist registry (HATVP — the Haute Autorité pour la transparence de la vie publique) revealed four distinct casings of the word "France." There was France (title case, the expected form), FRANCE (all caps), france (all lower), and at least one mixed-case variant. Each produced a different mask. The masks surfaced this inconsistency without any casing-specific validation rules — no regex, no lookup table, no rule that says "this field must be title case." The frequency distribution of masks simply showed that what should be a single pattern was in fact four, indicating data entry from different sources or systems with different conventions.

This generalises to any field where casing should be consistent: country names, status codes, category labels, department names, currency codes. If you profile such a field at LU grain and find multiple distinct masks for what should be a single-format value, you have a casing quality signal. The mask distribution is doing the validation for you. You do not need to define the expected casing in advance — the data tells you, through its masks, whether casing is consistent or not. And because the masks are stored as fact tables, you can track whether casing consistency improves or degrades over time, across loads, across source systems.
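The casing signal can be sketched as "more than one LU mask in a field that should hold a single format". The country values are illustrative, and the low grain rule carries the same punctuation assumption as the earlier sketches.

```python
import string
from collections import Counter

_MASK = str.maketrans(
    string.ascii_uppercase + string.ascii_lowercase + string.digits,
    "A" * 26 + "a" * 26 + "9" * 10,
)


def hu_mask(value: str) -> str:
    return value.translate(_MASK)


def lu_mask(value: str) -> str:
    # Collapse runs of A, a or 9; punctuation and spaces are copied unchanged (assumption).
    out = []
    for sym in hu_mask(value):
        if sym in "Aa9" and out and out[-1] == sym:
            continue
        out.append(sym)
    return "".join(out)


def casing_profile(values):
    """Count LU masks for a field that should hold one format; more than one mask is a casing signal."""
    counts = Counter(lu_mask(v) for v in values)
    return counts, len(counts) > 1


countries = ["France", "FRANCE", "france", "FRance", "France"]  # illustrative values
counts, inconsistent = casing_profile(countries)
print(counts, "casing inconsistent:", inconsistent)
```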

The Byte Frequency Approach

It is worth noting that the original byte-level approach (profiling the actual byte values present in a file, without interpreting them as characters) remains useful for a specific class of problem: file inspection. When you receive a file and need to determine its encoding, delimiters, and line endings, byte frequency analysis will tell you what byte values are present and at what frequencies. A UTF-8 file will show characteristic byte patterns: leading bytes in the 0xC2–0xF4 range followed by continuation bytes in the 0x80–0xBF range. A Latin-1 file will show bytes in the 0x80–0xFF range that do not form valid UTF-8 sequences. A file with mixed line endings will show both bare 0x0A (Unix) and 0x0D 0x0A (Windows).
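A rough sketch of this forensic pass in Python follows. It is not bytefreq's byte frequency mode. It counts byte values, separates CRLF from bare LF line endings, and checks whether the whole input decodes as UTF-8. It reads the data in one piece for simplicity; a large file would need chunked processing.

```python
from collections import Counter


def byte_report(data: bytes) -> dict:
    """Summarise raw bytes: high-byte count, line endings, UTF-8 validity, top bytes."""
    freq = Counter(data)  # iterating bytes yields ints 0-255
    crlf = data.count(b"\r\n")
    try:
        data.decode("utf-8")
        valid_utf8 = True
    except UnicodeDecodeError:
        valid_utf8 = False
    return {
        "bytes_0x80_plus": sum(n for b, n in freq.items() if b >= 0x80),
        "crlf_endings": crlf,
        "lf_only_endings": data.count(b"\n") - crlf,
        "valid_utf8": valid_utf8,
        "top_bytes": [(f"0x{b:02X}", n) for b, n in freq.most_common(5)],
    }


# Usage on a file:
# with open("data.csv", "rb") as fh:
#     print(byte_report(fh.read()))
print(byte_report("café\r\nnaïve\n".encode("utf-8")))
print(byte_report("café\n".encode("latin-1"))["valid_utf8"])  # False
```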

This forensic byte-level analysis is how bytefreq got its name. While the higher-level character class masking is the tool most users will reach for day-to-day, the byte frequency mode remains available for the cases where you need to understand what is in the file before you can even begin to interpret its contents.

Population Analysis

Structure Discovery: The Step Before Profiling

Before you profile the values in a field, you need to know what fields exist and how populated they are. This is structure discovery — the census before the survey. It answers the most basic question about a dataset: what is actually here?

For tabular data — CSV files, fixed-width extracts, database tables — structure discovery means counting non-null values per column. In the UK Companies House dataset (55 columns, 100,000 records), this immediately reveals that DissolutionDate is 0% populated (the extract contains only active companies), that the four SICCode columns cascade from 100% down to 2% (most companies register one industry code; very few register four), and that the PreviousName columns cascade from 11.5% to 0% (most companies have never changed their name, and almost none have changed it ten times). These are significant findings before a single mask is generated.

For nested JSON, structure discovery means walking the tree to find all unique field paths and counting how many records contain each. In the JMA earthquake data (80 events, 2,433 station observations), the field Head.Headline.Information appears in only 10% of records — indicating it is reserved for significant earthquakes that warrant a headline. The field Body.Earthquake.Hypocenter.Area.DetailedName appears at 0% in the sampled data, suggesting it is either deprecated or reserved for a specificity level that none of the sampled events triggered. The structure itself is the first finding.

The population profile of field paths creates a map of the dataset. Fields at 100% are the backbone — they appear in every record and define the core structure. Fields at 0% are dormant or deprecated. Fields between 1% and 50% are conditional — they exist for some record types but not others, and understanding why is often the most valuable insight in the entire analysis. A field that appears in 10% of records is not necessarily poorly populated; it may be correctly populated for the 10% of records where it applies.

For tabular data this computation is trivial: count non-nulls per column, divide by total rows. For nested JSON it requires walking each record's tree and accumulating path presence across the full dataset. Both bytefreq and DataRadar support this as a standard operation, producing a table of field paths sorted by population percentage.
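For nested JSON, the tree walk can be sketched as below. The sketch makes three assumptions: list items share their parent's path, null and empty-string leaves count as absent, and each path is counted at most once per record. The records are hypothetical, loosely modelled on the JMA field names above.

```python
from collections import Counter


def leaf_paths(node, prefix=""):
    """Yield the dotted path of every non-null leaf value in one JSON record."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from leaf_paths(value, f"{prefix}.{key}" if prefix else key)
    elif isinstance(node, list):
        for item in node:
            yield from leaf_paths(item, prefix)  # list items share their parent's path
    elif node is not None and node != "":
        yield prefix


def path_population(records):
    """Percentage of records in which each field path appears, highest first."""
    present = Counter()
    for record in records:
        present.update(set(leaf_paths(record)))  # count each path once per record
    n = len(records)
    return sorted(((p, 100.0 * c / n) for p, c in present.items()), key=lambda pc: (-pc[1], pc[0]))


records = [  # hypothetical records
    {"Head": {"Title": "A", "Headline": {"Information": "x"}}},
    {"Head": {"Title": "B"}},
    {"Head": {"Title": "C", "Headline": {"Information": None}}},
    {"Head": {"Title": "D"}},
]
for path, pct in path_population(records):
    print(f"{pct:6.1f}%  {path}")
```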

The worked examples in this book follow this pattern: every analysis begins with a structure discovery table showing field paths and population percentages, before any mask profiling begins. You cannot profile what you have not found, and you cannot interpret a population rate without knowing the full landscape of fields around it.

Once masks have been generated for every value in a column, the next step is to count them. The resulting frequency table — a list of unique masks and their occurrence counts — is the population profile of the column, and it is where the real insight lives.

The Power Law of Data

In practice, most columns in structured data follow a power law distribution when profiled by masks. A small number of masks (typically one to three) account for 80 to 95 percent of all values, representing the "expected" formats. A long tail of rare masks accounts for the remainder, representing anomalies, edge cases, errors, and format variations that the documentation did not mention.

The dominant masks tell you what the column is supposed to contain. The long tail tells you what has gone wrong, what has drifted, or what was never documented in the first place. The population profile is, in effect, a structural census of the column.

Reading a Population Profile

Consider a phone number column with one million rows. After masking at high grain, the population profile might look like this:

| Mask | Count | % | Cumulative |
|---|---|---|---|
| 99999 999999 | 812,000 | 81.2% | 81.2% |
| +99 9999 999999 | 95,000 | 9.5% | 90.7% |
| 9999 999 9999 | 42,000 | 4.2% | 94.9% |
| (999) 999-9999 | 31,000 | 3.1% | 98.0% |
| aaaa | 12,000 | 1.2% | 99.2% |
| 99999999999 | 4,200 | 0.4% | 99.6% |
| Aaaa aaa Aaaa | 2,100 | 0.2% | 99.8% |
| (other) | 1,700 | 0.2% | 100.0% |

This single view tells us more about the phone number column than any schema definition could. We can see that the dominant format is UK mobile (99999 999999, 81.2%), with significant minorities of international numbers, UK landlines, and US-formatted numbers. We can see that 1.2% of values are alphabetic — likely the strings null, none, or similar placeholders masking as aaaa. We can see 0.2% of values that look like names (Aaaa aaa Aaaa), almost certainly data in the wrong field. And we can see that the format without spaces (99999999999) is present but relatively rare, suggesting it might be a data entry variant rather than an error.

None of this required writing a single regex. None of it required a schema. The profiler generated the structural census mechanically, and the interpretation is immediate to anyone who can read the mask notation.

Key Metrics

From the population profile, several metrics are worth computing:

Coverage measures what percentage of values match the top N masks. If the top mask covers 99.9% of values, the column is structurally uniform and easy to process. If the top mask covers only 40%, the column contains significant structural diversity and will require more complex handling. Coverage is a quick indicator of how much work a column will create downstream.

Mask cardinality counts the number of distinct masks in the column. A well-formed date column might have one or two masks. A free-text name field might have hundreds. High mask cardinality suggests either legitimate diversity (names vary in length and format) or structural chaos (multiple unrelated data types in the same column). The distinction is usually obvious from the masks themselves. Note that columns containing non-Latin scripts (as discussed in Chapter 5) tend to have higher mask cardinality at high grain, because CJK, Arabic, and Cyrillic names vary in length just as Latin names do — the structural diversity is real, not an error.

Rare mask frequency identifies masks appearing fewer than N times, or below some percentage threshold of the total population. These are the candidates for investigation. They might be data entry errors, format migrations (records from an old system using a different format), encoding problems, or legitimate edge cases. The threshold is domain-dependent — in a million-row dataset, a mask appearing 10 times is probably an anomaly, while in a thousand-row dataset it might represent 1% of the data and be a genuine format variant.
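The three metrics can be computed from a mask frequency table in a few lines. In this sketch, top_n and rare_below are hypothetical parameter names with illustrative defaults. The phone counts come from the profile above, minus the "(other)" bucket (which is not a single mask), so the coverage here is relative to the listed masks only.

```python
from collections import Counter


def key_metrics(mask_counts, top_n=1, rare_below=10):
    """Coverage of the top_n masks, mask cardinality, and masks seen fewer than rare_below times."""
    total = sum(mask_counts.values())
    ranked = mask_counts.most_common()
    return {
        "coverage_pct": 100.0 * sum(n for _, n in ranked[:top_n]) / total,
        "mask_cardinality": len(ranked),
        "rare_masks": [m for m, n in ranked if n < rare_below],
    }


phone = Counter({
    "99999 999999": 812_000, "+99 9999 999999": 95_000, "9999 999 9999": 42_000,
    "(999) 999-9999": 31_000, "aaaa": 12_000, "99999999999": 4_200, "Aaaa aaa Aaaa": 2_100,
})
print(key_metrics(phone, top_n=4, rare_below=5_000))
```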

Finding the Cliff Point

The metrics above — coverage, mask cardinality, rare mask frequency — describe the shape of the distribution, but they do not tell you where to draw the line between "normal" and "investigate." The cliff point does.

Take the sorted mask frequency table and calculate one additional column: the percentage of previous mask. For each mask in the list, divide its count by the count of the mask immediately above it. The first mask has no predecessor, so start with the second.
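The percentage-of-previous column is one division per row. This sketch takes counts already sorted in descending order and reproduces the ratios for the phone number example.

```python
def percent_of_previous(counts):
    """Given counts sorted in descending order, express each as a percentage of the one
    above it. The first entry has no predecessor, so it is None."""
    if not counts:
        return []
    return [None] + [100.0 * cur / prev for prev, cur in zip(counts, counts[1:])]


phone_counts = [812_000, 95_000, 42_000, 31_000, 12_000, 4_200, 2_100, 1_700]
print([None if p is None else round(p, 1) for p in percent_of_previous(phone_counts)])
# [None, 11.7, 44.2, 73.8, 38.7, 35.0, 50.0, 81.0]
```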

Returning to our phone number example:

| Mask | Count | % of Previous |
|---|---|---|
| 99999 999999 | 812,000 | n/a |
| +99 9999 999999 | 95,000 | 11.7% |
| 9999 999 9999 | 42,000 | 44.2% |
| (999) 999-9999 | 31,000 | 73.8% |
| aaaa | 12,000 | 38.7% |
| 99999999999 | 4,200 | 35.0% |
| Aaaa aaa Aaaa | 2,100 | 50.0% |
| (other) | 1,700 | 81.0% |

Walking down the list, look at how the percentage-of-previous behaves. From position two onwards, the ratios are relatively stable — each mask is some reasonable fraction of the one above it, reflecting the gradual decline you would expect in a power law distribution. But in many real-world columns, there is a point where this ratio drops sharply. The count might go from 12,000 to 400 — a percentage-of-previous of 3.3% — where the preceding steps were in the 30-70% range.

That sharp drop is the cliff point. Everything above it is part of the expected population — patterns that are either correct or wrong in ways you have already accounted for. Everything below it is the exception zone: masks so rare relative to the population above them that they warrant individual inspection.

This is management by exception applied to data quality. Rather than reviewing every mask in a column, the cliff point tells you where to focus your attention. Above the cliff: normal operations. Below the cliff: the review queue.

The masks below the cliff point become a structured work list. For each one, the question is the same: does this pattern represent a new assertion rule that the profiler should learn, or a treatment function that downstream consumers need? A mask like 99-99-9999 appearing twelve times in a column of 9999-99-99 dates might indicate an American-format date that needs a treatment function to reorder the components. A mask like AAAA appearing three times might be the string NULL written literally, needing a rule to flag it as a placeholder. Each exception either produces a new rule, a new treatment, or a documented decision to accept the anomaly — and the cliff point is what surfaced it for review in the first place.

A Real Example: UK Postcodes in Companies House Data

To see the cliff point in practice, consider a real profiling run against 100,000 company records from the UK Companies House public dataset. The RegAddress.PostCode field — the registered office postcode — produces the following HU mask frequency table:

| Mask | Count | % | % of Previous |
|---|---|---|---|
| AA9 9AA | 38,701 | 38.4% | n/a |
| AA99 9AA | 35,691 | 35.4% | 92.2% |
| A99 9AA | 7,900 | 7.8% | 22.1% |
| A9 9AA | 5,956 | 5.9% | 75.4% |
| AA9A 9AA | 5,378 | 5.3% | 90.3% |
| (empty) | 4,367 | 4.3% | 81.2% |
| A9A 9AA | 1,967 | 2.0% | 45.0% |
| AA999AA | 7 | 0.0% | 0.4% ← cliff point |
| AA99AA | 5 | 0.0% | 71.4% |
| 99 999 | 2 | 0.0% | 40.0% |
| 9999 | 2 | 0.0% | 100.0% |
| A9 9AA | 2 | 0.0% | 100.0% |
| AA9 9AA. | 2 | 0.0% | 100.0% |
| AA99 9 AA | 1 | 0.0% | 50.0% |
| A99A 9AA | 1 | 0.0% | 100.0% |
| AAAAA 9 | 1 | 0.0% | 100.0% |

...and 14 more singletons

The first seven rows — the five standard UK postcode formats (AA9 9AA, AA99 9AA, A99 9AA, A9 9AA, AA9A 9AA), empty values, and the sixth format (A9A 9AA) — account for 99.96% of all records. The percentage-of-previous ratios in this zone are all between 22% and 92%, reflecting the natural variation in how common each postcode format is.

Then between A9A 9AA (1,967 records) and AA999AA (7 records), the count drops from nearly two thousand to single digits. The percentage-of-previous plummets to 0.4%. That is the cliff.

Below the cliff, every mask is a data quality issue worth inspecting:

- AA999AA and AA99AA: valid formats with the space missing (GU478QN, CH71ES). Treatment: insert the space.
- 99 999: a numeric value (20 052), clearly not a UK postcode. Likely a foreign postal code or data in the wrong field.
- A9 9AA: extra spaces (M2 2EE...), with trailing dots. Treatment: normalise whitespace, strip trailing punctuation.
- AA9 9AA.: trailing full stop (BR7 5HF.). Treatment: strip punctuation.
- AA99 9 AA: extra space inside the inward code (SW18 4 UH). Treatment: normalise to standard format.
- AAAAA 9: not a postcode at all (BLOCK 3). An address fragment in the wrong field.
- A_A9 9AA: contains a semicolon (L;N9 6NE). Data entry error; likely LN9 6NE.
- 9A AAA: inverted format (2L ONE). Not a postcode.

Each exception below the cliff either produces a treatment function (strip the trailing dot, normalise spacing, insert the missing space) or a flag for manual review (the numeric values, the BLOCK 3, the inverted formats). The cliff point surfaced all of them mechanically, without writing a single postcode-specific validation rule.
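A mechanical cliff search over the postcode table above might look like the sketch below. It returns the first row whose count falls below a fixed percentage of the row above it. The 5% default is a hypothetical threshold chosen for illustration, not a standard. A gradual slope, like the phone number example, yields no cliff at all.

```python
def find_cliff(counts, threshold_pct=5.0):
    """Return the index of the first mask whose count falls below threshold_pct percent
    of the mask above it, or None if there is no such drop. Counts must be sorted
    in descending order; the 5% default is an illustrative assumption."""
    for i in range(1, len(counts)):
        if 100.0 * counts[i] / counts[i - 1] < threshold_pct:
            return i
    return None


postcode_counts = [38_701, 35_691, 7_900, 5_956, 5_378, 4_367, 1_967, 7, 5, 2, 2, 2, 2, 1, 1, 1]
cliff = find_cliff(postcode_counts)
print(f"cliff at row {cliff + 1}: count {postcode_counts[cliff]}")  # cliff at row 8: count 7
```

Everything from the returned index onwards forms the review queue described earlier.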

In practice, the cliff point is not always a single dramatic drop. Some columns have a gradual slope with no obvious cliff — these are columns with genuine structural diversity (free-text fields, for example) where management by exception is less useful. Others have a razor-sharp cliff after the second or third mask, where 99% of the data conforms to two or three formats and everything else is noise. The clarity of the cliff point is itself diagnostic: a sharp cliff means the column has strong structural conventions; a gentle slope means it does not.

Population Checks

A separate but related technique is the population check, which tests whether each field is populated or empty. This is implemented as a special mask that returns 1 if a field contains a value and 0 if it is null or empty. When aggregated, it produces a per-field population percentage.
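A population check reduces to a 1/0 mask that is then averaged per field. In this sketch, treating whitespace-only strings as empty is an assumption, and the field names and rows are hypothetical.

```python
def popcheck(value) -> int:
    """Population mask: 1 if the field holds a value, 0 if it is null or empty.
    Treating whitespace-only strings as empty is an assumption of this sketch."""
    return 0 if value is None or str(value).strip() == "" else 1


def population_pct(rows, fields):
    """Aggregate the 1/0 population mask into a per-field population percentage."""
    n = len(rows)
    return {f: 100.0 * sum(popcheck(row.get(f)) for row in rows) / n for f in fields}


rows = [  # hypothetical records; a missing key counts as unpopulated
    {"PostCode": "BR7 5HF", "DissolutionDate": ""},
    {"PostCode": None, "DissolutionDate": ""},
    {"PostCode": "M2 2EE"},
]
print(population_pct(rows, ["PostCode", "DissolutionDate"]))
```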

Population checks are a basic hygiene measure but surprisingly revealing. A field that is documented as mandatory but shows 15% empty values indicates a data collection problem. A field that was previously 99.5% populated but has dropped to 80% suggests an upstream process change. A field that is 100% populated is either genuinely complete or has been backfilled with placeholders — and the mask profile of that field will tell you which.

When we built our reusable notebook for profiling data in Apache Spark, we included POPCHECKS as a standard mask alongside the ASCII high grain and low grain profilers, precisely because population analysis is so consistently useful as a first-pass check. The graphical output — a stacked bar chart showing populated versus missing values per field — is one of those visualisations that instantly tells you the shape of a dataset before you look at a single value.

Progressive Population

Some fields do not have a fixed population rate — they fill over time. In the French lobbyist registry (HATVP), financial disclosure fields such as expenditure, revenue, and employee count start empty for newly registered organisations and populate progressively as annual reporting periods pass. A field that is 60% populated today may be 80% populated next year — not because data quality improved, but because more reporting periods have elapsed. The data was never missing; it simply did not exist yet.

This means a single population snapshot can be misleading. A field at 40% populated might look sparse, but if the dataset covers five years of registrations and only three years of financial reporting are required, 40% is exactly what you would expect. The population rate must be interpreted in the context of the data's temporal structure. Without that context, you risk raising false alarms about fields that are behaving exactly as designed.
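One way to supply that temporal context is to split the population rate by a cohort column such as registration year. The sketch below assumes each record carries such a column; the field names `registered` and `expenditure` and the records themselves are hypothetical, chosen only to show how a 50% overall rate can hide two very different cohorts.

```python
from collections import defaultdict


def population_by_cohort(rows, field, cohort_field):
    """Population rate of `field`, broken down by the value of `cohort_field`."""
    seen = defaultdict(int)
    filled = defaultdict(int)
    for row in rows:
        cohort = row[cohort_field]
        seen[cohort] += 1
        value = row.get(field)
        if value is not None and value != "":
            filled[cohort] += 1
    return {cohort: filled[cohort] / seen[cohort] for cohort in sorted(seen)}


# Hypothetical records: older registrations have had time to report.
rows = [
    {"registered": 2020, "expenditure": "10000 à 24999 euros"},
    {"registered": 2020, "expenditure": "50000 à 99999 euros"},
    {"registered": 2024, "expenditure": None},
    {"registered": 2024, "expenditure": ""},
]
print(population_by_cohort(rows, "expenditure", "registered"))
```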

When monitoring population rates over time (as described in the Quality Monitoring chapter), progressive population creates a naturally rising baseline. Distinguishing "population increased because more time has passed" from "population increased because a data collection issue was fixed" requires understanding the business process behind the data. The population profile surfaces the question; domain knowledge answers it. This is a recurring theme in data quality on read: the profiler finds the pattern, but only someone who understands the domain can say whether the pattern is correct.

Wildcard Profiling

When the same field name appears at multiple levels of a nested structure, we can profile them collectively using a wildcard pattern. A query like *.Name gathers every Name field regardless of its position in the hierarchy, producing a single combined profile across all matching paths.

In the JMA earthquake data, Name appears at multiple nesting levels: Body.Earthquake.Hypocenter.Area.Name (the earthquake epicentre region), Body.Intensity.Observation.Pref.Name (the prefecture), Body.Intensity.Observation.Pref.Area.City.Name (the city), and Body.Intensity.Observation.Pref.Area.City.IntensityStation.Name (the individual monitoring station). Profiling *.Name collectively reveals whether the same character set and structural patterns are used consistently across all levels — or whether different nesting contexts use different conventions. If station names use Latin characters while prefecture names use kanji, the wildcard profile will show both populations in a single view.
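The mechanics can be sketched in Python by flattening a nested record into dotted paths and matching them with `fnmatch`, whose `*` also matches dots, so `*.Name` reaches every nesting level. This is an illustration under stated assumptions: the record below follows the JMA paths quoted above, but its romanised values are hypothetical (the real feed contains Japanese text), and `lu_mask` is a simplified low grain mask that keeps characters outside A/a/9 as-is rather than reproducing bytefreq's Unicode handling.

```python
from collections import Counter
from fnmatch import fnmatchcase


def lu_mask(value):
    """Simplified low grain mask: A/a/9 classes, repeated symbols collapsed."""
    out = []
    for ch in value:
        if ch.isupper():
            sym = "A"
        elif ch.islower():
            sym = "a"
        elif ch.isdigit():
            sym = "9"
        else:
            sym = ch  # other characters are kept as-is in this sketch
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


def flatten(obj, prefix=""):
    """Yield (dotted_path, leaf) pairs; list items share their parent's path."""
    if isinstance(obj, dict):
        for key, val in obj.items():
            yield from flatten(val, f"{prefix}.{key}" if prefix else key)
    elif isinstance(obj, list):
        for item in obj:
            yield from flatten(item, prefix)
    else:
        yield prefix, obj


def wildcard_profile(record, pattern):
    """Combined mask profile over all matching paths, plus per-path detail."""
    combined, by_path = Counter(), {}
    for path, value in flatten(record):
        if isinstance(value, str) and fnmatchcase(path, pattern):
            mask = lu_mask(value)
            combined[mask] += 1
            by_path.setdefault(path, Counter())[mask] += 1
    return combined, by_path


# Hypothetical record following the JMA paths named in the text.
record = {"Body": {
    "Earthquake": {"Hypocenter": {"Area": {"Name": "Noto Peninsula"}}},
    "Intensity": {"Observation": {"Pref": [{
        "Name": "ISHIKAWA",
        "Area": [{"City": [{
            "Name": "Wajima",
            "IntensityStation": [{"Name": "Wajima Station 1"}],
        }]}],
    }]}},
}}
combined, by_path = wildcard_profile(record, "*.Name")
print(combined)
```

The combined `Counter` is the overview; `by_path` is the drill-down that localises any anomaly to a specific nesting level.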

This extends across datasets. If postcodes appear in multiple nested structures — billing address, shipping address, registered office — profiling *.PostCode shows all postcodes regardless of context. When the aggregate profile reveals anomalies, you drill into individual paths to localise the issue. The wildcard gives you the overview; the specific path gives you the detail.

Wildcard profiling is particularly powerful for cross-cutting consistency checks: verifying that all date fields across a dataset use the same format, that all name fields share the same casing conventions, or that all identifier fields have the same structural pattern. It turns field-by-field analysis into a dataset-wide consistency check, catching format drift that would be invisible when examining one field at a time.

The Two-Pass Workflow

Combining population analysis with mask profiling gives us a general workflow for exploring any structured dataset:

- Run population checks to understand which fields are populated and which are sparse.

- Run low grain mask profiling to identify the structural families in each populated field.

- Review the long tail of rare masks to identify anomalies and potential quality issues.

- Drill into specific fields with high grain masking where precision matters (postcodes, phone numbers, dates, identifiers).

- Document the dominant masks as the "expected" formats for each field.
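The five steps above can be chained into a single exploratory pass. The Python below is a simplified, in-memory illustration that assumes rows are dictionaries; `explore`, its `rare_share` cut-off and the `drill_down` parameter are hypothetical, and the two mask functions approximate the high and low grain masks rather than reproducing the CLI or Spark engines.

```python
from collections import Counter


def hu_mask(value):
    """High grain mask: uppercase -> A, lowercase -> a, digit -> 9, others kept."""
    return "".join(
        "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        for ch in value
    )


def lu_mask(value):
    """Low grain mask: the high grain mask with runs of repeated symbols collapsed."""
    out = []
    for sym in hu_mask(value):
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


def explore(rows, rare_share=0.01, drill_down=()):
    """Run the five workflow steps over every field of a list of dict rows."""
    report = {}
    for field in sorted({f for row in rows for f in row}):
        present = [str(row[field]) for row in rows if row.get(field) not in (None, "")]
        entry = {"population": len(present) / len(rows)}         # 1. population check
        masks = Counter(lu_mask(v) for v in present)             # 2. low grain profile
        entry["rare"] = sorted(                                  # 3. long tail
            m for m, c in masks.items() if c / len(present) < rare_share
        )
        if field in drill_down:                                  # 4. high grain drill-down
            entry["hu_masks"] = Counter(hu_mask(v) for v in present)
        entry["expected"] = sorted(m for m in masks if m not in entry["rare"])  # 5.
        report[field] = entry
    return report


# Hypothetical data: 99 well-formed postcodes and one with the space missing.
rows = [{"postcode": "L23 0RG"}] * 99 + [{"postcode": "GU478QN"}]
report = explore(rows, rare_share=0.05, drill_down=("postcode",))
print(report["postcode"]["rare"], report["postcode"]["expected"])
```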

This workflow takes minutes on a modestly sized dataset (up to a few hundred thousand rows) and scales to millions of rows with the CLI tool or billions with the Spark engine. The output — a per-field summary of structural patterns — is the foundation on which the rest of the DQOR process is built: masks as error codes, treatment functions, and the flat enhanced format that ties it all together.

Masks as Error Codes

The idea introduced in the profiling chapter — that a mask can be used as a key to retrieve records of a particular structural type — leads to what is perhaps the most important insight in the entire DQOR framework: every mask is an implicit data quality error code.

If you know what masks are "correct" for a column, then every other mask is an error. And unlike a generic boolean flag ("valid" or "invalid"), the mask itself tells you what kind of error it represents. The mask 99999 appearing in a name column does not just say "this is wrong" — it says "this is a numeric value where text was expected." The mask A/A does not just say "this fails validation" — it says "this is a two-character abbreviation with a slash, probably a placeholder like N/A." The mask is the diagnosis.

This leads to a broader conclusion: we can build a general framework around mask-based profiling for data quality control and remediation, applied as data is read into the pipeline. Such a framework has several useful properties that are worth setting out explicitly.

Allow Lists and Exclusion Lists

The simplest way to operationalise masks as error codes is through allow lists and exclusion lists.

An allow list defines the acceptable masks for a column. Any value whose mask does not appear in the allow list is flagged as an anomaly. For a UK postcode column, the allow list might contain:

A9 9AA

A99 9AA

A9A 9AA

AA9 9AA

AA99 9AA

AA9A 9AA

These six masks cover all valid UK postcode formats. Any value that produces a different mask — aaaa (lowercase text), 99999 (numeric), A/A (placeholder), or an empty string — is automatically flagged, and the mask tells you exactly what structural form the offending value takes.
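An allow-list check is a set lookup on the mask. The sketch below assumes a simple high grain mask (uppercase to A, lowercase to a, digits to 9, everything else kept); `hu_mask` and `check_allow` are illustrative names. Returning the mask alongside the verdict keeps the error code attached to every rejected value.

```python
UK_POSTCODE_ALLOW = {"A9 9AA", "A99 9AA", "A9A 9AA", "AA9 9AA", "AA99 9AA", "AA9A 9AA"}


def hu_mask(value):
    """High grain mask: uppercase -> A, lowercase -> a, digit -> 9, others kept."""
    return "".join(
        "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        for ch in value
    )


def check_allow(value, allow):
    """Return (is_allowed, mask); the mask doubles as the error code."""
    mask = hu_mask(value)
    return mask in allow, mask


for value in ["SW1A 1AA", "l23 0rg", "20052", "N/A", ""]:
    print(repr(value), check_allow(value, UK_POSTCODE_ALLOW))
```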

An exclusion list takes the opposite approach: it defines masks that are known to be problematic, and flags any value that matches. This is useful when the set of valid formats is large or open-ended (as with free-text name fields), but certain structural patterns are reliably indicative of errors:

9999 → numeric value in a text field

(empty) → empty string (zero-length value)

a → single lowercase character

aaaa://aaa.aaa → URL in a name field
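An exclusion list works the other way round: a dictionary from known-bad mask to diagnosis. This sketch uses the four example entries above with the same simplified high grain mask; `check_exclusion` is a hypothetical name.

```python
def hu_mask(value):
    """High grain mask: uppercase -> A, lowercase -> a, digit -> 9, others kept."""
    return "".join(
        "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        for ch in value
    )


EXCLUSIONS = {
    "9999": "numeric value in a text field",
    "": "empty string (zero-length value)",
    "a": "single lowercase character",
    "aaaa://aaa.aaa": "URL in a name field",
}


def check_exclusion(value, exclusions):
    """Return the diagnosis for a known-bad mask, or None if nothing matched."""
    return exclusions.get(hu_mask(value))


for value in ["Morgan", "1234", "x", "http://www.abc"]:
    print(repr(value), check_exclusion(value, EXCLUSIONS))
```

At high grain an exclusion entry matches only values of exactly that length, so `12345` slips past the `9999` entry. Keying the exclusion list on low grain masks, where any run of digits collapses to a single `9`, is one way to make each entry cover a whole structural family.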

This is not a theoretical exercise. In the UK Companies House profiling (see the Worked Example appendix), the RegAddress.PostCode field at LU grain produces just two dominant masks — A9 9A (88.3%, e.g. L23 0RG) and A9A 9A (7.3%, e.g. W1W 7LT) — which together cover all six standard UK postcode formats when expanded at HU grain. These two masks plus the empty value (4.4%) account for 99.96% of the data. An allow list of {A9 9A, A9A 9A, (empty)} at LU grain would instantly flag the remaining 0.04% — records containing missing spaces (GU478QN), trailing punctuation (BR7 5HF.), embedded semicolons (L;N9 6NE), and values that are not postcodes at all (BLOCK 3, 2L ONE). The allow list is three entries. The error detection is comprehensive.
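The three-entry low grain allow list can be checked against the values quoted above. The sketch assumes that low grain masking means the high grain mask with runs of repeated symbols collapsed; `lu_mask` is an illustrative name. The six well-formed examples (one per high grain format) are standard illustrative postcodes rather than records from the Companies House file; the anomalies are the values quoted in this section.

```python
LU_ALLOW = {"A9 9A", "A9A 9A", ""}


def lu_mask(value):
    """Low grain mask: A/a/9 classes with runs of the same symbol collapsed."""
    out = []
    for ch in value:
        sym = "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


# One example per high grain format, plus the two quoted examples and an empty value.
well_formed = ["M1 1AA", "M60 1NW", "W1A 0AX", "CR2 6XH", "DN55 1PT", "EC1A 1BB",
               "L23 0RG", "W1W 7LT", ""]
anomalies = ["GU478QN", "BR7 5HF.", "L;N9 6NE", "BLOCK 3", "2L ONE"]

flagged = {v: lu_mask(v) for v in well_formed + anomalies if lu_mask(v) not in LU_ALLOW}
print(flagged)
```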

In practice, allow lists are more useful for format-controlled fields (postcodes, phone numbers, dates, identifiers) where the set of valid patterns is finite and known. Exclusion lists are more useful for free-text fields where the valid patterns are diverse but certain structural types are reliably wrong.

Building Quality Gates

The combination of population analysis and mask-based error codes creates a natural quality gate for incoming data:

- Profile the column using mask-based profiling at the appropriate grain level.

- Compare each mask against the allow list (or exclusion list) for that column.

- Check population thresholds — is the proportion of "good" masks above the minimum acceptable level? Has a previously rare "bad" mask suddenly increased in frequency?

- Route errors by mask: different masks may require different handling. A placeholder (A/A) might be replaced with a null. An all-caps name (AAAA AAAAA) might be normalised to title case. A numeric value in a name field (99999) might be quarantined for manual review.
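A per-batch gate covering the allow-list comparison, the good-mask threshold and routing by mask can be sketched as follows. It works at low grain, so the route keys are the low grain equivalents of the masks above (A/A stays A/A, AAAA AAAAA becomes A A, 99999 becomes 9). The allow list, the 90% threshold, the action names and the sample values are all hypothetical, and drift against earlier batches is not covered by this sketch.

```python
def lu_mask(value):
    """Low grain mask: A/a/9 classes with runs of the same symbol collapsed."""
    out = []
    for ch in value:
        sym = "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


def quality_gate(values, allow, min_good_share, routes):
    """Check the share of allowed masks and route each exception by its mask."""
    masks = [lu_mask(v) for v in values]
    good = sum(1 for m in masks if m in allow)
    good_share = good / len(masks) if masks else 0.0
    routed = {}
    for value, mask in zip(values, masks):
        if mask not in allow:
            action = routes.get(mask, "review")  # unrecognised masks go to review
            routed.setdefault(action, []).append(value)
    return {"passed": good_share >= min_good_share, "good_share": good_share,
            "routed": routed}


NAME_ALLOW = {"Aa", "Aa Aa"}
NAME_ROUTES = {"A/A": "replace_with_null", "A A": "title_case", "9": "quarantine"}
values = ["Jane Smith", "Ann Lee", "Bob", "N/A", "JOHN SMITH", "12345", "j.doe"]
result = quality_gate(values, NAME_ALLOW, min_good_share=0.9, routes=NAME_ROUTES)
print(result["passed"], result["routed"])
```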

The French lobbyist registry provides a concrete example of routing by mask. The director's role field (dirigeants.fonction) produces masks that reveal three casing conventions in use: Aa Aa for title case (Directeur Général, 92 records), Aa a for French grammatical case (Directeur général, 74 records), and A A for uppercase (DIRECTEUR GENERAL, 29 records). A quality gate on this field would not flag any of these as errors — they are all valid role descriptions. But it would route each casing variant to a normalisation function, ensuring that downstream analytics do not create three separate categories for what is semantically the same role. The mask is not just an error detector; it is a router. (See the Worked Example: Profiling the French Lobbyist Registry appendix.)
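Routing by mask amounts to a lookup table from mask to normalisation function. In the sketch below, the choice of French sentence case as the target convention, the `ROLE_ROUTES` table and the `normalise_role` name are hypothetical; the three low grain masks are the ones reported for dirigeants.fonction.

```python
def lu_mask(value):
    """Low grain mask: A/a/9 classes with runs of the same symbol collapsed."""
    out = []
    for ch in value:
        sym = "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


def sentence_case(text):
    """Capitalise the first character and lower-case the rest."""
    return text[:1].upper() + text[1:].lower()


# Hypothetical routing table: low grain mask -> normalisation function.
ROLE_ROUTES = {
    "Aa a": lambda s: s,     # already in French sentence case
    "Aa Aa": sentence_case,  # title case
    "A A": sentence_case,    # upper case
}


def normalise_role(value):
    """Route a role description by its mask; None means send for review."""
    route = ROLE_ROUTES.get(lu_mask(value))
    return route(value) if route else None


for role in ["Directeur Général", "Directeur général", "DIRECTEUR GENERAL", "DG"]:
    print(repr(role), "->", repr(normalise_role(role)))
```

Casing normalisation cannot restore accents lost in upper-case entry, so DIRECTEUR GENERAL becomes Directeur general. Merging it with Directeur général needs a further step, for example comparing accent-stripped forms.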

The quality gate can run automatically on every new batch of data, providing a continuous structural health check. When the profile of incoming data drifts — a new mask appears that was not seen before, or the population of a known-bad mask increases — the gate flags it for investigation.
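Drift detection compares the mask profile of a new batch against a baseline profile. This sketch assumes both profiles are `Counter` objects keyed by mask; `detect_drift` and its `rise_factor` of 2.0 (a known-bad mask must at least double its share to be reported) are hypothetical, and the counts in the example are toy numbers.

```python
from collections import Counter


def detect_drift(baseline, batch, bad_masks, rise_factor=2.0):
    """Return (masks new in this batch, known-bad masks whose share has risen)."""
    base_total = sum(baseline.values()) or 1
    batch_total = sum(batch.values()) or 1
    new_masks = sorted(m for m in batch if m not in baseline)
    rising = []
    for mask in sorted(bad_masks):
        before = baseline.get(mask, 0) / base_total
        after = batch.get(mask, 0) / batch_total
        if after > 0 and after >= rise_factor * before:
            rising.append(mask)
    return new_masks, rising


# Toy profiles at low grain: the missing-space mask A9A jumps from 0.5% to 5%.
baseline = Counter({"A9 9A": 950, "A9A 9A": 45, "A9A": 5})
batch = Counter({"A9 9A": 900, "A9A 9A": 40, "A9A": 50, "9 9": 10})
print(detect_drift(baseline, batch, bad_masks={"A9A"}))
```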

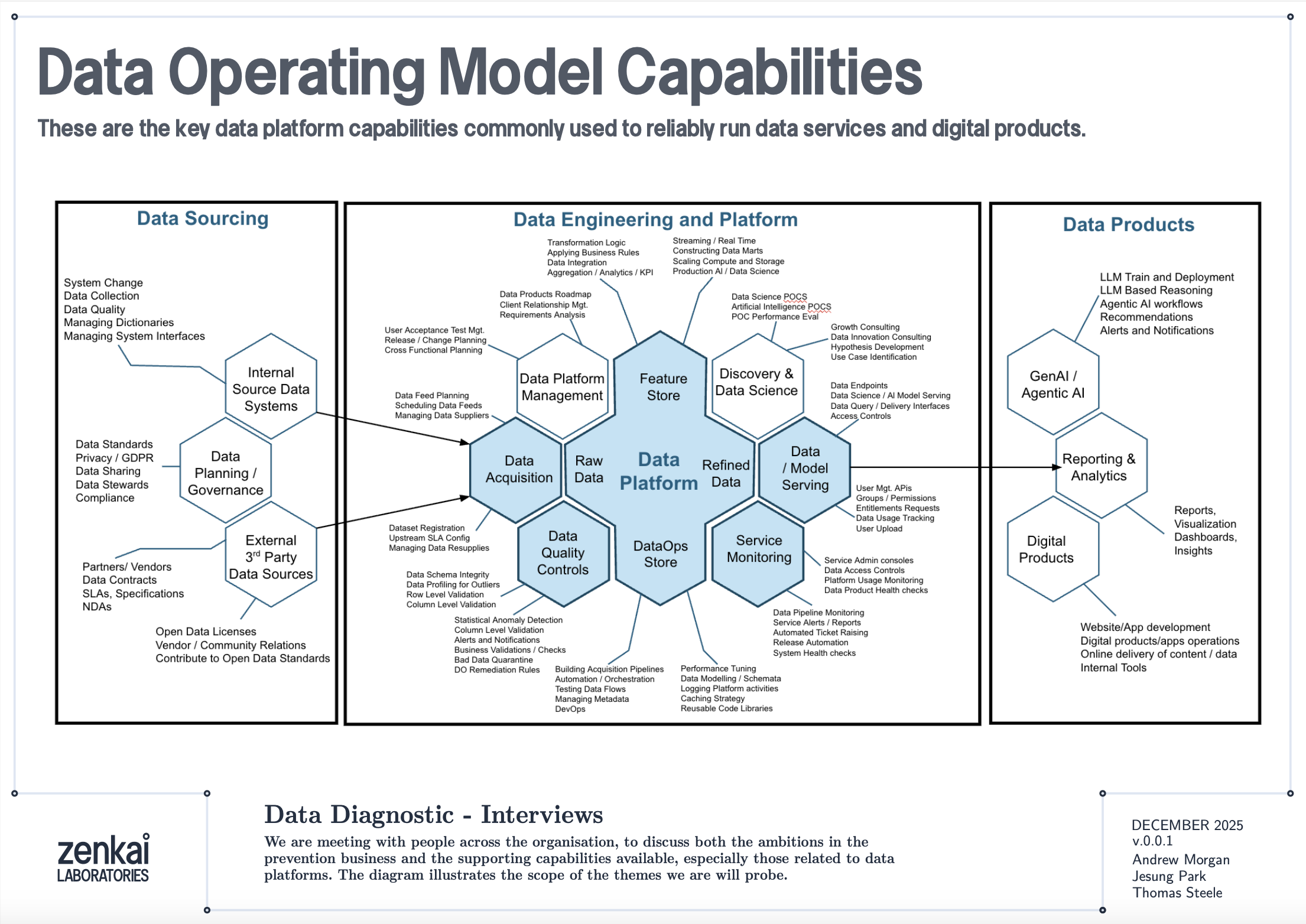

This approach maps directly to the Data Quality Controls capability described in enterprise data operating models, where dataset registration, profiling for outliers, column-level validation, alerts and notifications, bad data quarantine, and DQ remediation rules are all core components. Mask-based profiling provides a single mechanism that addresses all of these capabilities, because the mask itself serves as the registration key, the outlier detector, the validation check, the alert trigger, the quarantine criterion, and the remediation lookup key — all from one pass over the data.

Masks as Provenance

There is a secondary benefit to treating masks as error codes that is easy to overlook: they provide provenance for quality decisions. When a downstream consumer asks "why was this record flagged?" or "why was this value changed?", the mask provides a clear, reproducible answer. The record was flagged because its mask was 99999 and the allow list for the name column does not include numeric masks. The value was changed because its mask was AAAA AAAAA and the treatment function for that mask is title-case normalisation.
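The audit trail can be made concrete by recording each decision together with the mask that caused it. The `QualityDecision` record, the `decide` function, the toy name allow list and the use of Python's `str.title` as the title-case treatment are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


def hu_mask(value):
    """High grain mask: uppercase -> A, lowercase -> a, digit -> 9, others kept."""
    return "".join(
        "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        for ch in value
    )


@dataclass(frozen=True)
class QualityDecision:
    """One quality decision, carrying the mask that justifies it."""
    field: str
    value: str
    mask: str
    action: str
    reason: str
    new_value: Optional[str] = None


def decide(field, value, allow, treatments):
    mask = hu_mask(value)
    if mask in allow:
        return QualityDecision(field, value, mask, "accept",
                               f"mask {mask!r} is in the allow list for {field}")
    if mask in treatments:
        name, treat = treatments[mask]
        return QualityDecision(field, value, mask, name,
                               f"mask {mask!r} maps to treatment {name!r}", treat(value))
    return QualityDecision(field, value, mask, "flag",
                           f"mask {mask!r} is not in the allow list for {field}")


NAME_ALLOW = {"Aaaa Aaaaa", "Aaa Aaa"}                    # toy allow list
TREATMENTS = {"AAAA AAAAA": ("title_case", str.title)}
for v in ["John Smith", "JOHN SMITH", "12345"]:
    print(decide("name", v, NAME_ALLOW, TREATMENTS).reason)
```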

This audit trail is built into the mechanism by construction. No additional logging or documentation is required — the mask is both the detection method and the explanation. In regulated environments where data lineage and transformation justification are compliance requirements, this property is particularly valuable.

Text-Encoded Numeric Ranges

A particularly instructive pattern occurs when numeric data is encoded as text ranges rather than as actual numbers. In the French lobbyist registry (HATVP), the expenditure field contains values like 50000 à 99999 euros and 10000 à 24999 euros. These are not numbers — they are text descriptions of numeric bands. The mask at HU grain is something like 99999 a 99999 aaaaa, which clearly reveals the structure: digits, then the French word "à", then more digits, then a unit label.

This is a mask-as-error-code in a subtle sense. The mask is not "wrong" — the data faithfully represents what was reported. But the mask tells you that this field cannot be aggregated numerically without transformation. You cannot sum these ranges, compute averages, or join them to numeric thresholds. The mask diagnoses the field as requiring a treatment function that either extracts the midpoint, maps the range to a numeric band code, or flags it for domain-specific interpretation.
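The midpoint treatment can be gated on the mask so that it only runs on values with the expected structure. At low grain, 50000 à 99999 euros collapses to 9 a 9 a. The regular expression, the NFC normalisation step and the `range_midpoint` name are assumptions made for this sketch, and a midpoint is only one of the treatment options mentioned above.

```python
import re
import unicodedata

RANGE_PATTERN = re.compile(r"(\d+) à (\d+) euros")


def lu_mask(value):
    """Low grain mask: A/a/9 classes with runs of the same symbol collapsed."""
    out = []
    for ch in value:
        sym = "A" if ch.isupper() else "a" if ch.islower() else "9" if ch.isdigit() else ch
        if not out or out[-1] != sym:
            out.append(sym)
    return "".join(out)


def range_midpoint(value):
    """Midpoint of an 'N à M euros' band, or None if the value needs review."""
    value = unicodedata.normalize("NFC", value)  # compose a decomposed "à"
    if lu_mask(value) != "9 a 9 a":
        return None  # structurally different: not this encoding scheme
    match = RANGE_PATTERN.fullmatch(value)
    if match is None:
        return None  # same mask, different words (e.g. "5 to 10 years")
    low, high = int(match.group(1)), int(match.group(2))
    return (low + high) / 2


for v in ["50000 à 99999 euros", "10000 à 24999 euros", "5 to 10 years", "18-24 years"]:
    print(repr(v), range_midpoint(v))
```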

This pattern generalises beyond French expenditure data. Survey responses ("18-24 years", "25-34 years"), salary bands ("£30,000-£39,999"), and classification ranges ("Category A-C") all encode numeric information as text. Mask-based profiling surfaces these immediately because the structural fingerprint — digits mixed with letters and delimiters — is visually distinct from either pure numeric or pure text fields. The mask doesn't just flag the anomaly; it tells you the exact encoding scheme being used.